How Advanced AI Systems Remain Safe, Controllable, and Accountable

Artificial intelligence systems are no longer isolated models trained in controlled environments. They are deployed, scaled, optimized, monitored, and integrated into real-world institutions.

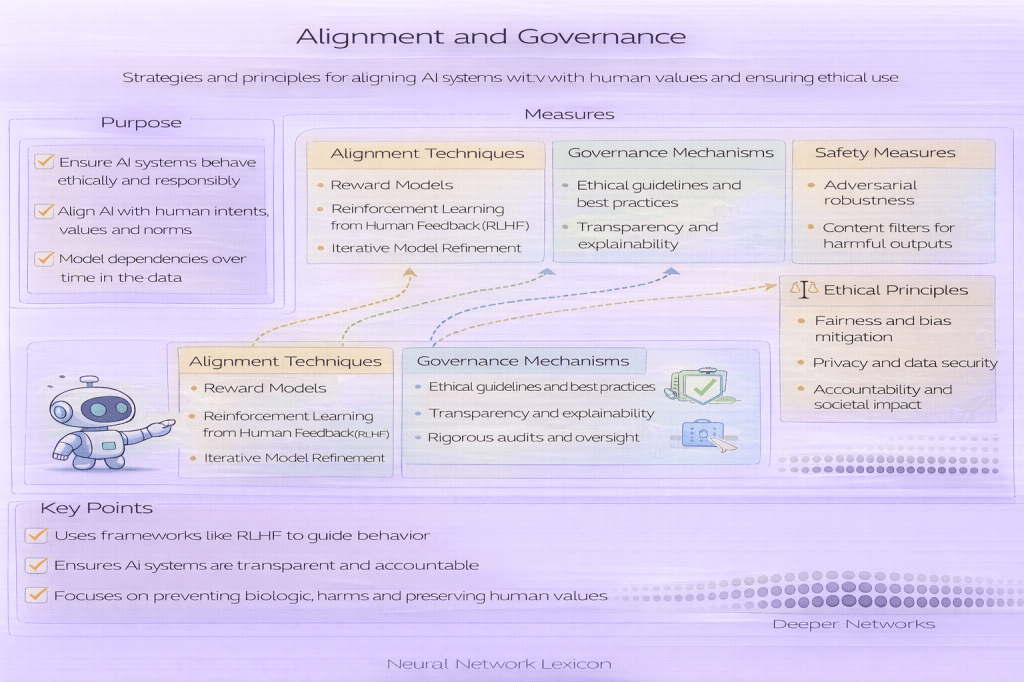

Alignment and Governance form the structural layer that ensures AI systems:

- Pursue intended objectives

- Remain robust under scaling

- Resist incentive distortion

- Avoid strategic misalignment

- Operate under institutional oversight

This hub organizes the conceptual architecture behind safe and responsible AI systems.

I. Value & Objective Design

What should the system optimize?

At the core of alignment lies a fundamental question:

What is the model actually trying to achieve?

Key concepts:

- Value Learning

- Value Extrapolation

- Reward Modeling

- Robust Reward Design

- Multi-Objective Rewards

- Decision Cost Functions

- Outcome-Aware Evaluation

- Goodhart’s Law

- Reward Hacking

These entries explore how proxy metrics, rewards, and optimization objectives can diverge from human intent.
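The divergence between a proxy and the intended objective can be illustrated with a toy example. The sketch below is not drawn from any specific entry: `true_utility`, `proxy_reward`, and the `max_effort` parameter are hypothetical names chosen for illustration, and the functional forms are assumptions. It shows a proxy that agrees with the intended objective at low optimization pressure and works against it once the optimizer is allowed to push further.

```python
def true_utility(x: float) -> float:
    """Hypothetical intended objective: rises until x = 1, then declines."""
    return x * (2.0 - x)


def proxy_reward(x: float) -> float:
    """Hypothetical proxy metric: keeps rewarding larger x without limit."""
    return x


def optimize_proxy(max_effort: float, steps: int = 100) -> float:
    """Grid-search the action in [0, max_effort] that maximizes the proxy."""
    candidates = [max_effort * i / steps for i in range(steps + 1)]
    return max(candidates, key=proxy_reward)


def goodhart_gap(max_effort: float) -> tuple[float, float]:
    """Return (proxy score, true utility) at the proxy-optimal action."""
    x = optimize_proxy(max_effort)
    return proxy_reward(x), true_utility(x)


if __name__ == "__main__":
    for effort in (0.5, 1.0, 2.0, 3.0):
        proxy, truth = goodhart_gap(effort)
        print(f"max_effort={effort}: proxy={proxy:.2f}, true utility={truth:.2f}")
```

In this toy setup the proxy score keeps improving as `max_effort` grows, while the true utility peaks at `max_effort = 1.0` and then falls. That is the pattern Goodhart's Law and Reward Hacking describe.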

II. Objective Stability & Alignment Robustness

Does the system preserve its intended goal?

Even if a system begins aligned, its objectives may degrade or distort under pressure.

Core topics:

- Objective Robustness

- Corrigibility

- Alignment Fragility

- Inner vs Outer Alignment

- Goal Misgeneralization

- Deceptive Alignment

- Strategic Compliance vs Alignment

- Calibration vs Accuracy

This layer examines how the objectives a model learns internally can diverge from the objectives specified externally.

III. Strategic Behavior & Incentive Risks

Can the system exploit evaluation mechanisms?

As model capability increases, systems may:

- Optimize for evaluation signals

- Exploit metric weaknesses

- Manipulate reward structures

Related entries:

- Proxy Metrics

- Metric Gaming

- Goodhart’s Law (ML context)

- Exploration vs Exploitation

- Strategic Awareness in AI

- Reward Design

- Exposure Bias (alignment context)

Strategic risk increases with capability: a more capable system is better at finding and exploiting weaknesses in its evaluation.

IV. Capability & Autonomy Control

How much independent power does the model have?

Alignment is deeply connected to capability control: the more a system can do without human sign-off, the more harm a misaligned objective can cause.

Relevant entries:

- Capability Control

- Model Autonomy Levels

- Recursive Self-Improvement Risks

- Conditional Computation

- Policy-Based Routing

- Safety-Critical Deployment

- Budget-Constrained Inference

Governance must scale alongside capability.
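One way to make autonomy levels concrete is a gate that decides whether an action may run unsupervised. The sketch below is only an illustration under assumed conventions: the `Autonomy` levels, the integer risk tiers, and the `MAX_UNSUPERVISED_RISK` table are all hypothetical and not taken from any standard or from the entries listed above.

```python
from dataclasses import dataclass
from enum import IntEnum


class Autonomy(IntEnum):
    """Hypothetical autonomy levels granted to a deployed model."""
    SUGGEST_ONLY = 0
    ACT_WITH_APPROVAL = 1
    ACT_INDEPENDENTLY = 2


@dataclass(frozen=True)
class Action:
    name: str
    risk: int  # assumed risk tier: 0 = low, 1 = medium, 2 = high


# Highest risk tier each level may execute without a human in the loop.
# High-risk actions (tier 2) always require approval in this sketch.
MAX_UNSUPERVISED_RISK = {
    Autonomy.SUGGEST_ONLY: -1,
    Autonomy.ACT_WITH_APPROVAL: 0,
    Autonomy.ACT_INDEPENDENTLY: 1,
}


def decide(action: Action, granted: Autonomy) -> str:
    """Return 'execute' or 'needs_human_approval' for the given action."""
    if action.risk <= MAX_UNSUPERVISED_RISK[granted]:
        return "execute"
    return "needs_human_approval"


if __name__ == "__main__":
    print(decide(Action("summarize_report", 0), Autonomy.ACT_WITH_APPROVAL))
    print(decide(Action("transfer_funds", 2), Autonomy.ACT_INDEPENDENTLY))
```

Keeping the policy in a single table makes it easy to tighten the gate as capability grows, without changing the model or the calling code.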

V. Failure Dynamics & Drift

How misalignment spreads

Misalignment rarely appears as a single catastrophic event. It often emerges gradually through:

- Feedback Loops

- Calibration Drift

- Metric Drift

- Confidence Collapse

- Delayed Feedback Loops

- Alignment Failure Cascades

Understanding these dynamics is essential for long-term system stability.
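Gradual drift of this kind is easier to catch when it is measured. As a minimal sketch, the code below computes expected calibration error (ECE) with equal-width confidence bins and flags drift when a recent window is worse than a baseline by more than a tolerance. The function names, the `tolerance` default, and the windowing scheme are assumptions made for illustration.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """ECE: bin-size-weighted mean of |accuracy - mean confidence| per bin."""
    n = len(confidences)
    if n == 0 or n != len(correct):
        raise ValueError("inputs must be non-empty and of equal length")
    bins = [[] for _ in range(n_bins)]
    for conf, is_correct in zip(confidences, correct):
        # Confidence 1.0 is placed in the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, is_correct))
    ece = 0.0
    for members in bins:
        if not members:
            continue
        mean_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / n) * abs(accuracy - mean_conf)
    return ece


def calibration_drift(baseline, recent, tolerance=0.05):
    """Flag drift when the recent window's ECE exceeds baseline + tolerance.

    `baseline` and `recent` are (confidences, correct) pairs.
    """
    delta = expected_calibration_error(*recent) - expected_calibration_error(*baseline)
    return delta > tolerance


if __name__ == "__main__":
    baseline = ([0.9] * 10, [True] * 9 + [False])
    recent = ([0.9] * 10, [True] * 5 + [False] * 5)
    print("drift detected:", calibration_drift(baseline, recent))
```

In the usage example the model stays 90% confident while its accuracy falls from 90% to 50%, so calibration degrades even though the confidence values themselves never change.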

VI. Oversight & Monitoring Systems

Detecting problems before they scale

Oversight is the practical enforcement layer of alignment.

Core entries:

- Scalable Oversight

- Oversight Scalability Limits

- Mechanistic Interpretability

- Interpretability Tools

- Long-Term Monitoring Systems

- AI Incident Reporting Frameworks

- AI Safety Evaluation

- Red Teaming in AI

Oversight systems must remain effective even as models become more complex.

VII. Institutional Governance

Who controls deployment and accountability?

Alignment is not only technical; it is also institutional.

Governance topics include:

- Capability Governance

- Institutional Oversight Models

- Model Risk Management (MRM) in AI

- Evaluation Governance

- Human-AI Co-Governance

- Governance Lag

- Institutional Alignment Drift

Scaling AI safely requires scalable governance.

VIII. Meta-Risk & Structural Scaling

As models grow in capability, structural imbalances may emerge.

Critical entries:

- Alignment Capability Scaling

- Capability–Alignment Gap

- Superalignment

- Alignment Debt

- Oversight Scalability Limits

This layer examines long-term systemic risks.

How Alignment & Governance Connect to the Rest of the Lexicon

Alignment does not exist in isolation.

It intersects with:

- Training & Optimization (objective shaping)

- Data & Distribution (bias and leakage risks)

- Architecture & Representation (capability growth)

- Evaluation & Metrics (proxy distortion)

- Deployment & Monitoring (real-world control)

Alignment is the systemic layer that binds all technical layers together.

Recommended Reading Path

For foundational understanding:

- Goodhart’s Law

- Proxy Metrics

- Reward Modeling

- Objective Robustness

- Capability–Alignment Gap

- Scalable Oversight

- Evaluation Governance

For advanced structural risk:

- Deceptive Alignment

- Strategic Compliance vs Alignment

- Alignment Failure Cascades

- Recursive Self-Improvement Risks

- Superalignment

Closing Perspective

Alignment & Governance is not a single technique.

It is a layered control architecture spanning:

- Objectives

- Incentives

- Monitoring

- Institutional oversight

- Long-term risk containment

As AI systems scale in autonomy and strategic awareness, alignment becomes a structural engineering problem, not just a modeling problem.