Short Definition

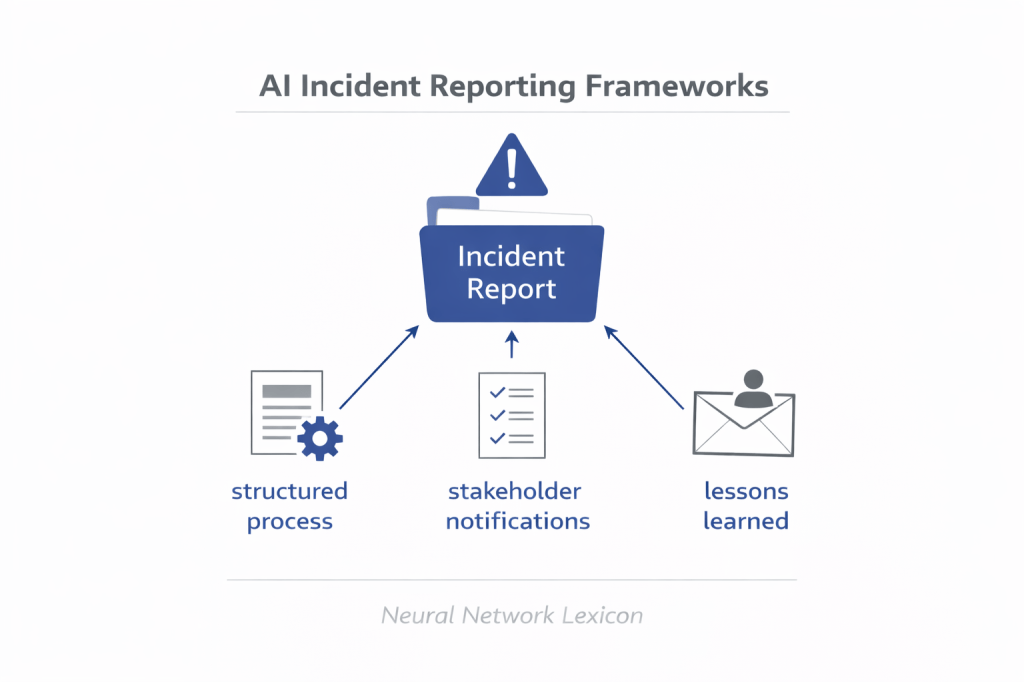

AI Incident Reporting Frameworks are structured systems for documenting, escalating, analyzing, and responding to failures or harmful events involving AI systems.

Definition

AI Incident Reporting Frameworks are formal governance mechanisms designed to capture and manage incidents where AI systems behave unexpectedly, cause harm, violate policies, or exhibit alignment failures. These frameworks define how incidents are classified, documented, investigated, escalated, and publicly communicated.

Incident handling must be institutionalized, not left ad hoc or ignored.

Why It Matters

AI systems:

- Operate in dynamic environments.

- Interact with real users.

- May exhibit rare but harmful failures.

- Can cause cascading systemic effects.

Without structured reporting:

- Failures are under-documented.

- Root causes remain unidentified.

- Lessons are lost.

- Alignment debt increases.

Transparency enables improvement.

Core Objectives

AI incident reporting frameworks aim to:

- Detect and log harmful events

- Standardize incident classification

- Trigger escalation procedures

- Preserve forensic data

- Enable root cause analysis

- Support regulatory compliance

- Build institutional memory

Learning from failure requires documentation.

Minimal Conceptual Illustration

Incident Occurs

↓

Incident Logging

↓

Severity Classification

↓

Investigation & Root Cause Analysis

↓

Mitigation & Policy Update

↓

Documentation & Reporting

Failure becomes feedback.
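The flow above can be read as a simple linear state machine. The following is a minimal sketch under that assumption; the `Stage` and `Incident` names, the field names, and the example ID are hypothetical and not part of any standard.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    """Lifecycle stages, in the order shown in the flow above."""
    OCCURRED = 1
    LOGGED = 2
    CLASSIFIED = 3
    INVESTIGATED = 4
    MITIGATED = 5
    REPORTED = 6


@dataclass
class Incident:
    # `incident_id` and `summary` are hypothetical field names.
    incident_id: str
    summary: str
    stage: Stage = Stage.OCCURRED
    history: list = field(default_factory=list)

    def advance(self) -> Stage:
        """Move to the next stage; stages cannot be skipped."""
        if self.stage is Stage.REPORTED:
            raise ValueError("incident is already fully reported")
        self.history.append(self.stage)  # keep a trace of completed stages
        self.stage = Stage(self.stage.value + 1)
        return self.stage


# Walk one illustrative incident through the whole lifecycle.
incident = Incident("INC-001", "Model produced restricted content")
while incident.stage is not Stage.REPORTED:
    incident.advance()
```

Forcing every incident through each stage in order is one way to ensure that classification and investigation are never silently skipped.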

Types of AI Incidents

1. Performance Failures

Severe degradation under distribution shift.

2. Alignment Failures

Objective misgeneralization or policy violations.

3. Safety Violations

Harmful or restricted content generation.

4. Operational Failures

System outages, latency spikes, cascading errors.

5. Governance Failures

Deployment without proper validation.

Incidents span technical and institutional domains.

Severity Classification

Incidents may be categorized as:

- Low impact

- Moderate impact

- High impact

- Critical / catastrophic

Severity determines escalation urgency.
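As an illustration of how severity might drive escalation urgency, the sketch below maps each level to a response window. The time windows and the executive-review rule are assumptions chosen for the example; real values would come from an organization's own policy.

```python
from datetime import timedelta
from enum import IntEnum


class Severity(IntEnum):
    LOW = 1
    MODERATE = 2
    HIGH = 3
    CRITICAL = 4


# Hypothetical response windows; not taken from any regulation or standard.
ESCALATION_WINDOW = {
    Severity.LOW: timedelta(days=7),
    Severity.MODERATE: timedelta(days=2),
    Severity.HIGH: timedelta(hours=4),
    Severity.CRITICAL: timedelta(minutes=30),
}


def escalation_window(severity: Severity) -> timedelta:
    """Return the maximum time allowed before the incident is escalated."""
    return ESCALATION_WINDOW[severity]


def needs_executive_review(severity: Severity) -> bool:
    """Assumed rule: HIGH and CRITICAL incidents go to executive review."""
    return severity >= Severity.HIGH
```

Using an ordered enum keeps comparisons such as `severity >= Severity.HIGH` explicit and avoids string matching on labels.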

Relationship to Model Risk Management (MRM)

MRM provides:

- Risk classification standards.

- Escalation pathways.

- Independent review processes.

Incident reporting operationalizes MRM during real-world failures.

Relationship to Long-Term Monitoring Systems

Monitoring systems:

- Detect anomalies and drift.

Incident frameworks:

- Convert anomalies into structured response actions.

Detection without response is insufficient.
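One way to picture the hand-off from monitoring to incident handling is a function that turns an anomaly into a ticket once it crosses a threshold. This is a sketch only: the `Anomaly` and `IncidentTicket` types, the 10% default tolerance, and the action text are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Anomaly:
    metric: str
    observed: float
    baseline: float


@dataclass
class IncidentTicket:
    title: str
    action: str


def to_incident(anomaly: Anomaly, tolerance: float = 0.10) -> Optional[IncidentTicket]:
    """Open a ticket when the relative deviation from baseline exceeds `tolerance`."""
    if anomaly.baseline == 0:
        raise ValueError("baseline must be non-zero")
    deviation = abs(anomaly.observed - anomaly.baseline) / abs(anomaly.baseline)
    if deviation <= tolerance:
        return None  # within tolerance: monitoring continues, no incident
    return IncidentTicket(
        title=f"{anomaly.metric} deviated {deviation:.0%} from baseline",
        action="open investigation",
    )


# Illustrative values only.
ticket = to_incident(Anomaly("accuracy", observed=0.70, baseline=0.90))
no_ticket = to_incident(Anomaly("accuracy", observed=0.89, baseline=0.90))
```

The explicit `None` return makes the "no incident" case a deliberate decision rather than a missing code path.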

Relationship to Alignment Failures Framework

Incident reporting:

- Documents specific cases.

Alignment Failures framework:

- Analyzes systemic patterns.

Case documentation supports theoretical refinement.
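To make the step from individual cases to systemic patterns concrete, the sketch below counts root causes across incident records and keeps those that recur. The record layout and the sample entries are invented for illustration and do not describe real incidents.

```python
from collections import Counter

# Hypothetical records: (incident_id, category, root_cause).
records = [
    ("INC-001", "alignment", "reward misspecification"),
    ("INC-002", "performance", "distribution shift"),
    ("INC-003", "alignment", "reward misspecification"),
    ("INC-004", "operational", "dependency outage"),
    ("INC-005", "performance", "distribution shift"),
]


def recurring_root_causes(records, min_count=2):
    """Return root causes that appear in at least `min_count` incidents."""
    counts = Counter(root_cause for _, _, root_cause in records)
    return {cause: n for cause, n in counts.items() if n >= min_count}


patterns = recurring_root_causes(records)
```

A root cause that shows up across several documented cases is a candidate for systemic analysis rather than a one-off fix.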

Key Design Principles

1. Transparency

Clear documentation of failure.

2. Non-Punitive Reporting Culture

Encourages early detection.

3. Independent Review

Prevents internal bias from suppressing or downplaying findings.

4. Auditability

Maintain traceable decision records.

5. Continuous Improvement

Integrate lessons into reward design and governance.

Accountability must be structured.
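The auditability principle can be illustrated with an append-only log in which each record's hash depends on the previous one, so later edits become detectable. This is a minimal sketch of a hash chain, not a production audit system; the `AuditLog` class and the entry fields are hypothetical.

```python
import hashlib
import json


def _digest(entry: dict, prev_hash: str) -> str:
    """Hash an entry together with the hash of the record before it."""
    payload = json.dumps(entry, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditLog:
    def __init__(self) -> None:
        self._records = []  # list of (entry, hash) tuples, oldest first

    def append(self, entry: dict) -> str:
        """Add a decision record and return its chained hash."""
        prev_hash = self._records[-1][1] if self._records else ""
        record_hash = _digest(entry, prev_hash)
        self._records.append((dict(entry), record_hash))
        return record_hash

    def verify(self) -> bool:
        """Recompute the chain; any altered entry breaks verification."""
        prev_hash = ""
        for entry, record_hash in self._records:
            if _digest(entry, prev_hash) != record_hash:
                return False
            prev_hash = record_hash
        return True


log = AuditLog()
log.append({"incident": "INC-001", "decision": "escalate"})
log.append({"incident": "INC-001", "decision": "rollback model"})
intact = log.verify()
```

Because each hash covers the previous hash, changing an early record invalidates every record after it, which is what makes the decision trail traceable.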

Failure Modes of Incident Frameworks

- Underreporting

- Delayed escalation

- Blame avoidance

- Lack of transparency

- Incident normalization

- Regulatory evasion

Frameworks must resist institutional inertia.

Regulatory Dimension

Emerging regulatory trends include:

- Mandatory AI incident disclosure

- External audit reporting

- Public transparency requirements

- Risk disclosure statements

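Incident reporting is shifting from voluntary practice to legal requirement in some jurisdictions; for example, the EU AI Act obliges providers of high-risk AI systems to report serious incidents to the relevant authorities.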

Strategic Importance

AI incident reporting:

- Reduces systemic risk.

- Prevents recurrence.

- Supports regulatory trust.

- Protects institutional credibility.

- Accelerates alignment learning.

Failure becomes an asset when analyzed.

Long-Term Perspective

As AI systems grow:

- Incidents may become more complex.

- Root causes may span multiple subsystems.

- Cascading failures may increase.

Structured reporting becomes more critical as systems scale.

Summary Characteristics

| Aspect | AI Incident Reporting Frameworks |
|---|---|
| Focus | Post-failure governance |
| Scope | Technical + institutional |
| Lifecycle phase | Post-deployment |
| Risk addressed | Recurrent systemic failure |
| Alignment relevance | High |