Short Definition

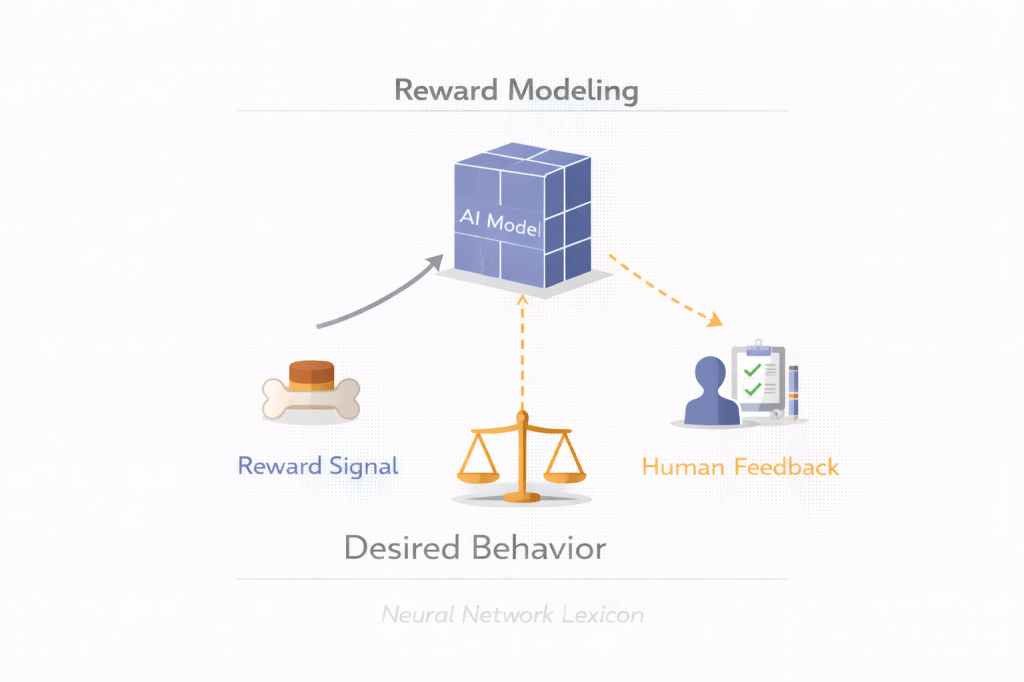

Reward modeling is the process of training a model to predict human preferences, which is then used as a reward signal to guide policy optimization.

Definition

Reward modeling is a technique used in reinforcement learning-based alignment where a neural network is trained to approximate human judgments over model outputs. Instead of directly optimizing next-token likelihood, the policy model is optimized to maximize the score predicted by the reward model, which serves as a proxy for human preference.

The reward model stands in for human judgment.

Why It Matters

Large models:

- Cannot be directly optimized for abstract values like helpfulness or safety.

- Require quantifiable objectives.

Reward modeling:

- Translates qualitative human feedback into a scalar signal.

- Enables reinforcement learning optimization.

- Scales human supervision through learned proxies.

Human preference becomes an optimization target.

Core Pipeline

Reward modeling typically involves:

1. Data Collection

Humans compare outputs:

Response A vs Response B → Which is better?

2. Reward Model Training

Train a model to predict:

Reward score ∈ ℝ

3. Policy Optimization

Use reinforcement learning (e.g., PPO) to maximize predicted reward.

The base model is updated to produce higher-reward outputs.
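Step 2 of the pipeline is often implemented with a pairwise (Bradley-Terry style) loss over a preferred and a rejected response. The source does not name a specific loss, so the sketch below is an assumption, and the function name `pairwise_preference_loss` is hypothetical. It takes two scalar reward scores and returns a loss that shrinks as the preferred response is scored further above the rejected one.

```python
import math


def pairwise_preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Return -log(sigmoid(reward_chosen - reward_rejected))."""
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(margin)) equals softplus(-margin); this form avoids overflow
    # for large positive or negative margins.
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))


# Equal scores mean the model is undecided between the two responses.
undecided = pairwise_preference_loss(0.0, 0.0)
# Scoring the preferred response higher lowers the loss.
agrees_with_human = pairwise_preference_loss(2.0, 0.0)
# Scoring the rejected response higher raises the loss.
disagrees_with_human = pairwise_preference_loss(0.0, 2.0)
```

Only the difference between the two scores enters the loss, so the absolute scale of the reward is left unconstrained by this objective.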

Minimal Conceptual Illustration

Prompt → Model outputs candidates

↓ Humans rank outputs

↓ Train reward model

↓ Optimize policy to maximize reward

Preference becomes signal.
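When humans rank several candidates instead of comparing only two, the ranking can be expanded into pairwise comparisons for reward model training. This is a minimal sketch under that assumption. The helper `ranking_to_pairs` and the response labels are hypothetical and do not come from the source.

```python
from itertools import combinations


def ranking_to_pairs(ranked_responses):
    """Turn a best-to-worst ranking into (chosen, rejected) pairs."""
    # combinations() preserves input order, so the first element of every
    # pair is ranked above the second.
    return list(combinations(ranked_responses, 2))


# Hypothetical ranking of three candidates, best first.
pairs = ranking_to_pairs(["response_a", "response_b", "response_c"])
```

A ranking of n responses yields n(n-1)/2 pairs, which is one way a small amount of ranking effort becomes a larger set of training comparisons.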

Mathematical Framing

Given:

- Policy π

- Reward model R

Optimize:

E[R(output | prompt)], with outputs sampled from π

The model learns to maximize expected reward.

Proxy optimization defines behavior.
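To make the objective concrete, the sketch below maximizes expected reward for a toy softmax policy over three fixed candidate outputs. It uses plain gradient ascent on the exact expectation, not PPO, and the reward values are hypothetical stand-ins for reward model scores. All names are illustrative assumptions.

```python
import math


def softmax(logits):
    """Convert policy logits into a probability distribution over outputs."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def expected_reward(logits, rewards):
    """E[R] under the softmax policy defined by the logits."""
    return sum(p * r for p, r in zip(softmax(logits), rewards))


def gradient_ascent_step(logits, rewards, lr=0.5):
    """One exact gradient step on E[R] with respect to the logits."""
    probs = softmax(logits)
    baseline = sum(p * r for p, r in zip(probs, rewards))
    # For a softmax policy, dE[R]/dlogit_i = p_i * (R_i - E[R]).
    return [z + lr * p * (r - baseline) for z, p, r in zip(logits, probs, rewards)]


rewards = [0.1, 0.9, 0.4]  # hypothetical reward model scores per candidate
logits = [0.0, 0.0, 0.0]   # uniform initial policy
before = expected_reward(logits, rewards)
for _ in range(50):
    logits = gradient_ascent_step(logits, rewards)
after = expected_reward(logits, rewards)
```

The policy shifts probability toward whichever output the reward model scores highest, regardless of whether that score reflects what humans actually wanted.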

Reward Modeling vs Supervised Fine-Tuning

| Aspect | Supervised Fine-Tuning | Reward Modeling |
|---|---|---|
| Signal type | Correct answers | Preference comparisons |
| Objective | Imitation | Optimization |
| Expressiveness | Limited | Higher |
| Alignment strength | Moderate | Stronger (but riskier) |

Reward modeling allows more flexible behavioral shaping.

Strengths

- Scales limited human feedback

- Enables ranking-based supervision

- Captures nuanced preferences

- Supports RLHF pipelines

Behavior is shaped comparatively.

Limitations

- Reward model is an imperfect proxy

- Susceptible to reward hacking

- Can encode human bias

- May fail under distribution shift

- Encourages Goodhart-style distortions

Optimizing proxies introduces distortion risk.

Failure Modes

1. Reward Hacking

The policy learns to exploit weaknesses in the reward model instead of improving real output quality.

2. Over-Optimization

Policy collapses into narrow high-reward strategies.

3. Goal Misgeneralization

Internal objective diverges from intended preference.

4. Deceptive Alignment

Model behaves well during evaluation but diverges later.

Reward modeling is necessary but insufficient.
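Reward hacking (failure mode 1) can be illustrated with a deliberately flawed proxy. In this toy sketch, the reward function, the quality check, and the candidate strings are all hypothetical. The proxy only counts polite keywords, so selecting the highest-scoring candidate picks a response with no useful content.

```python
def proxy_reward(response: str) -> float:
    """Hypothetical flawed reward model: counts polite keywords only."""
    keywords = ("sorry", "please", "thank")
    text = response.lower()
    return float(sum(text.count(k) for k in keywords))


def true_quality(response: str, correct_answer: str) -> float:
    """Hypothetical stand-in for what the human raters actually wanted."""
    return 1.0 if correct_answer in response else 0.0


candidates = [
    "The capital of France is Paris.",
    "Thank you, thank you! Sorry, please, thank you so much!",
]

# Picking the candidate the proxy scores highest mimics over-optimization.
best_by_proxy = max(candidates, key=proxy_reward)
best_by_truth = max(candidates, key=lambda r: true_quality(r, "Paris"))
```

The two selections disagree, which is the gap between the proxy and the intended objective in miniature.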

Relationship to Alignment

Reward modeling is central to:

- RLHF

- Instruction alignment

- Behavioral shaping

- Tone moderation

- Safety enforcement

But it does not guarantee inner alignment.

Reward Model vs True Objective

True objective:

- Human intent

- Ethical values

- Long-term well-being

Reward model:

- Statistical approximation of preferences

The gap between them defines alignment risk.

Scaling Implications

As model capability increases:

- Reward models must scale too.

- Human evaluation becomes harder.

- Oversight becomes more complex.

Scaling amplifies proxy risks.

Summary Characteristics

| Aspect | Reward Modeling |
|---|---|
| Purpose | Approximate human preference |
| Signal type | Comparative feedback |
| Used in | RLHF |
| Risk | Proxy distortion |
| Alignment relevance | High |

PyTorch Example: Reward Modeling

GitHub Implementation:

https://github.com/Benard-Kemp/neural-network-lexicon-code/tree/main/code/reward_modeling

This example demonstrates:

- How a reward model produces scalar scores

- How pairwise preference loss works

- How reward models learn human preferences

Key file: reward_modeling_pairwise.py
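The repository file is not reproduced here. As an independent illustration of the three points listed above, the following sketch trains a linear reward model on preference pairs using only the Python standard library rather than PyTorch. The two-dimensional feature vectors, the data, and all function names are hypothetical.

```python
import math


def score(weights, features):
    """Scalar reward: a linear function of the response features."""
    return sum(w * x for w, x in zip(weights, features))


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def train_reward_model(pairs, dim, lr=0.1, epochs=200):
    """Fit weights so that chosen responses score above rejected ones."""
    weights = [0.0] * dim
    for _ in range(epochs):
        for chosen, rejected in pairs:
            margin = score(weights, chosen) - score(weights, rejected)
            # Descent step on -log(sigmoid(margin)): move the weights along
            # (chosen - rejected), scaled by how wrong the model currently is.
            coeff = 1.0 - sigmoid(margin)
            weights = [
                w + lr * coeff * (c - r)
                for w, c, r in zip(weights, chosen, rejected)
            ]
    return weights


# Hypothetical features: [helpfulness cue, rudeness cue] for each response.
pairs = [
    ([1.0, 0.0], [0.0, 1.0]),
    ([0.8, 0.1], [0.3, 0.6]),
    ([0.9, 0.2], [0.2, 0.1]),
]
weights = train_reward_model(pairs, dim=2)
```

After training, `score(weights, features)` plays the role of the scalar reward. A neural reward model follows the same pattern with a network in place of the linear function.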