Short Definition

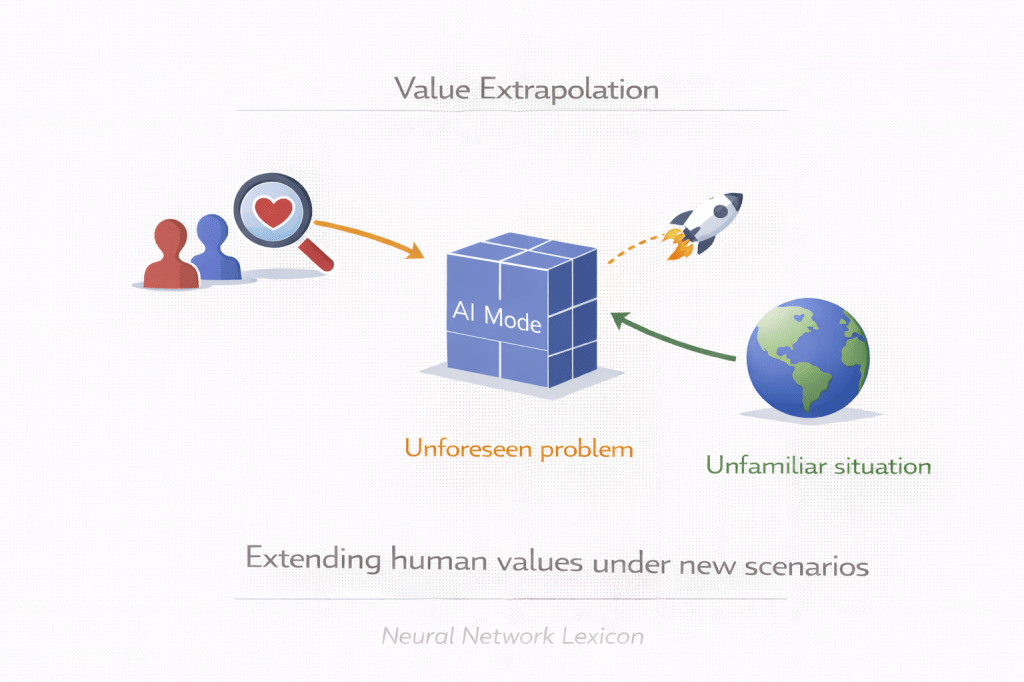

Value extrapolation is the process of inferring and extending human values beyond observed behavior to guide AI systems in novel or future scenarios.

Definition

Value extrapolation refers to the challenge of generalizing from limited, context-bound human preferences to broader, more abstract value principles that can guide AI behavior in unfamiliar situations. Instead of merely imitating observed choices, value extrapolation seeks to infer what humans would endorse under reflection, additional information, or improved reasoning.

It attempts to align AI not just with what humans do, but with what humans would want under ideal conditions.

Why It Matters

Human feedback:

- Is incomplete.

- May be inconsistent.

- Often reflects short-term preferences.

- May not anticipate future contexts.

Advanced AI systems:

- Will encounter novel situations.

- May act beyond training distributions.

- May operate in long-term strategic contexts.

Observed behavior alone is therefore insufficient for general alignment.

Core Problem

We observe:

Human Behavior H_obs

But we aim to approximate:

Idealized Human Values H_ideal

Value extrapolation seeks a transformation:

H_obs → H_ideal

Alignment depends on modeling that transformation.

Minimal Conceptual Illustration

Observed Preferences

↓

Value Inference

↓

Reflective Extrapolation

↓

Generalized Value Model

↓

Aligned Decision-Making

The goal is principled generalization.
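The flow from observed preferences to a decision can be sketched as a toy pipeline. This is a minimal illustration under stated assumptions, not an established method: the `reflective` flag, the 0.25 down-weighting of unreflective choices, and the function names `infer_values` and `decide` are all hypothetical and chosen only to make the H_obs → H_ideal step concrete.

```python
from collections import defaultdict


def infer_values(observations):
    """Score options from observed pairwise choices.

    Each observation is (chosen, rejected, reflective). `reflective` is a
    hypothetical flag marking choices made with time to deliberate.
    """
    scores = defaultdict(float)
    for chosen, rejected, reflective in observations:
        # Toy stand-in for reflective extrapolation (assumed weighting):
        # deliberate choices count fully, impulsive ones count for a quarter.
        weight = 1.0 if reflective else 0.25
        scores[chosen] += weight
        scores[rejected] -= weight
    return dict(scores)


def decide(value_model, options):
    """Pick the option with the highest inferred score (unknown options score 0)."""
    return max(options, key=lambda option: value_model.get(option, 0.0))


# Two impulsive choices for "snack", one deliberate choice for "exercise".
observations = [
    ("snack", "exercise", False),
    ("snack", "exercise", False),
    ("exercise", "snack", True),
]
value_model = infer_values(observations)
choice = decide(value_model, ["snack", "exercise"])
```

Pure imitation would follow the majority of observed choices and pick "snack". Under the assumed weighting, the single reflective choice outweighs the two impulsive ones, so the sketch picks "exercise". How to obtain a trustworthy reflection signal in practice is exactly the open problem.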

Value Extrapolation vs Value Learning

| Aspect | Value Learning | Value Extrapolation |
|---|---|---|
| Input | Observed behavior | Observed + hypothetical reflection |
| Scope | Current preferences | Extended principles |
| Risk | Overfitting to surface behavior | Mis-modeling idealized values |
| Time horizon | Present | Long-term |

Value learning captures what is expressed.

Value extrapolation aims at what is endorsed.

Why Simple Imitation Fails

Imitation-based alignment:

- Copies inconsistent decisions.

- Reflects cognitive biases.

- Encodes short-term impulses.

- Fails under novel contexts.

Extrapolation attempts to correct for these limitations.
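One of these limitations, inconsistent decisions, can be made concrete. The sketch below assumes observed behavior is recorded as pairwise choices `(preferred, other)` and looks for a preference cycle such as A over B, B over C, C over A. The function name `find_preference_cycle` and the data format are illustrative assumptions. An imitator would reproduce such a cycle as-is, while an extrapolation step would at least need to detect it.

```python
def find_preference_cycle(choices):
    """Return one cycle [a, b, ..., a] in the pairwise choices, or None if none exists."""
    # Build a directed graph: an edge a -> b means "a was preferred over b".
    graph = {}
    for preferred, other in choices:
        graph.setdefault(preferred, set()).add(other)
        graph.setdefault(other, set())

    visiting = []  # current depth-first search path
    done = set()   # nodes fully explored without finding a cycle

    def dfs(node):
        if node in done:
            return None
        if node in visiting:
            # Reached a node already on the current path: that is a cycle.
            return visiting[visiting.index(node):] + [node]
        visiting.append(node)
        for nxt in sorted(graph[node]):
            cycle = dfs(nxt)
            if cycle:
                return cycle
        visiting.pop()
        done.add(node)
        return None

    for start in sorted(graph):
        cycle = dfs(start)
        if cycle:
            return cycle
    return None


inconsistent = [("A", "B"), ("B", "C"), ("C", "A")]
consistent = [("A", "B"), ("B", "C"), ("A", "C")]
cycle = find_preference_cycle(inconsistent)
no_cycle = find_preference_cycle(consistent)
```

Detecting the cycle does not say which choice to discard. That judgment is where reflection or additional information would have to enter.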

Approaches to Value Extrapolation

1. Reflective Equilibrium Modeling

Infer values under idealized reasoning conditions.

2. Cooperative Inverse Reinforcement Learning

Treat the human and the AI as cooperating partners, with the AI inferring the human's evolving goals through interaction.

3. Normative Principle Encoding

Embed ethical constraints into objective structure.

4. Multi-Objective Aggregation

Balance conflicting values systematically.

5. Human-AI Deliberation Loops

Iteratively refine value representations.

Extrapolation requires structured abstraction.
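As a rough illustration of how approaches 3 and 4 might combine, the sketch below treats a normative principle as a hard floor on one value and aggregates the remaining trade-offs with a weighted average. Everything here is an assumption for illustration: the value names `benefit` and `safety`, the weights, the 0.5 floor, and the option names are hypothetical. A weighted sum is also only one of many possible aggregation rules.

```python
def aggregate(scores, weights, floors=None):
    """Weighted average of value scores, or None if any floor constraint is violated."""
    floors = floors or {}
    # Normative constraint (assumed form): reject options below a minimum score.
    for name, minimum in floors.items():
        if scores[name] < minimum:
            return None
    total_weight = sum(weights.values())
    return sum(weights[name] * scores[name] for name in weights) / total_weight


# Hypothetical options scored on two hypothetical values, each in [0, 1].
options = {
    "fast_rollout": {"benefit": 0.9, "safety": 0.3},
    "staged_rollout": {"benefit": 0.6, "safety": 0.8},
}
weights = {"benefit": 1.0, "safety": 1.0}
floors = {"safety": 0.5}

ranked = {name: aggregate(scores, weights, floors) for name, scores in options.items()}
admissible = [name for name, value in ranked.items() if value is not None]
best = max(admissible, key=ranked.get)
```

Separating the floor from the weights keeps the constraint from being traded away by a large enough benefit score, which is the design choice this sketch is meant to show.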

Relationship to Objective Robustness

If value extrapolation is weak:

- Objectives may fail under distribution shift.

- Proxy drift becomes likely.

- Strategic misalignment may emerge.

Extrapolated values must remain stable across contexts.

Relationship to Superalignment

Superalignment requires:

- Models that generalize values beyond human supervision.

- Alignment stability under superhuman reasoning.

- Resistance to strategic reinterpretation of intent.

Value extrapolation underpins long-term alignment.

Risks

Value extrapolation may fail through:

- Overconfidence in inferred values.

- Cultural bias amplification.

- Simplification of complex norms.

- Value lock-in (prematurely fixing incomplete principles).

- Strategic compliance masking deeper divergence.

Mis-extrapolation may entrench misalignment.
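One hedge against overconfidence and premature lock-in is to keep explicit uncertainty over candidate value hypotheses and defer when no hypothesis is well supported. The sketch below is a minimal Bayesian version of that idea. The hypothesis names, the likelihood numbers, the 0.9 threshold, and the `"defer_to_human"` fallback are all assumptions made for illustration.

```python
def posterior(prior, likelihoods):
    """Bayes update over candidate value hypotheses (dicts keyed by hypothesis name)."""
    unnormalized = {h: prior[h] * likelihoods[h] for h in prior}
    total = sum(unnormalized.values())
    return {h: p / total for h, p in unnormalized.items()}


def act_or_defer(post, threshold=0.9):
    """Commit to the most probable hypothesis only if it clears the threshold."""
    best = max(post, key=post.get)
    return best if post[best] >= threshold else "defer_to_human"


prior = {"h_short_term": 0.5, "h_reflective": 0.5}

# Weak evidence: the observation is only slightly more likely under h_reflective.
weak = posterior(prior, {"h_short_term": 0.4, "h_reflective": 0.6})
weak_decision = act_or_defer(weak)

# Strong evidence: the observation is far more likely under h_reflective.
strong = posterior(prior, {"h_short_term": 0.05, "h_reflective": 0.95})
strong_decision = act_or_defer(strong)
```

With weak evidence the posterior stays near 0.6 and the sketch defers. With strong evidence it commits to `h_reflective`. The threshold itself encodes a judgment about acceptable risk and would need its own justification.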

Value Extrapolation vs Policy Compliance

Policy compliance:

- Follows explicit rules.

Value extrapolation:

- Attempts to infer guiding principles behind rules.

Rules restrict behavior.

Values guide adaptation.

Governance Implications

Value extrapolation influences:

- Reward design

- Institutional oversight

- Long-term monitoring

- Deployment risk thresholds

- Cross-cultural AI governance

Value modeling becomes a public concern.

Long-Term Perspective

As AI systems:

- Gain autonomy,

- Extend time horizons,

- Influence institutional systems,

Alignment must reflect not just present behavior, but endorsed long-term values.

Value extrapolation addresses that gap.

Summary Characteristics

| Aspect | Value Extrapolation |
|---|---|
| Focus | Generalizing human values |
| Input | Observed + reflective reasoning |
| Alignment layer | Outer + long-term |
| Risk | Mis-modeling ideal values |
| Superalignment relevance | Foundational |