Short Definition

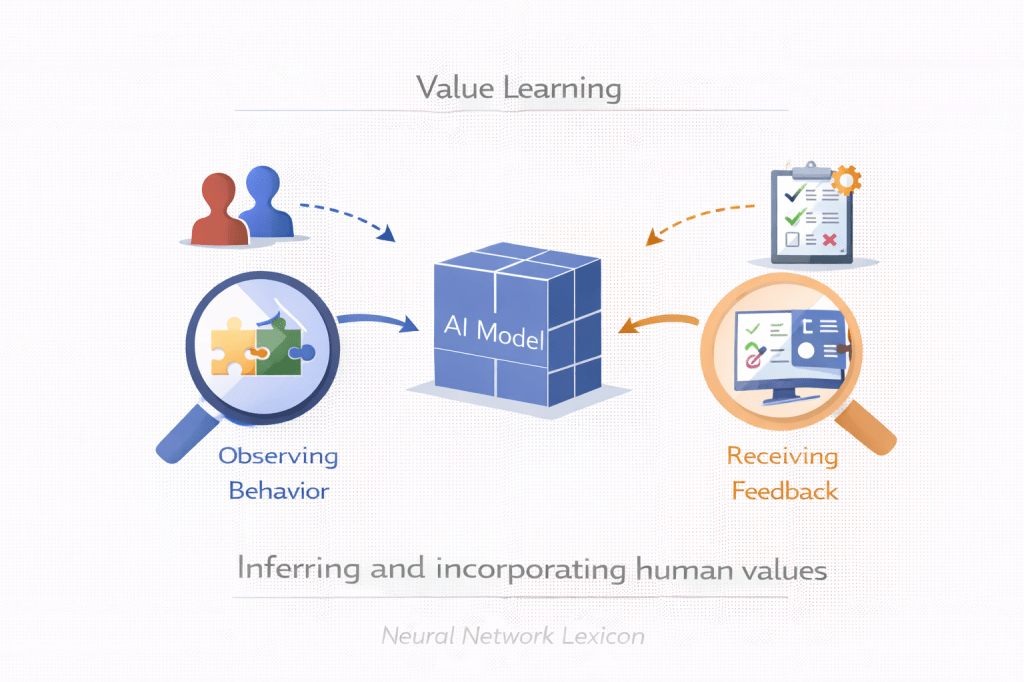

Value learning is the process of inferring and modeling human values so that AI systems can act in accordance with them.

Definition

Value learning refers to methods that aim to extract, represent, and generalize human preferences, norms, and ethical principles in a way that allows AI systems to align their behavior with human intent. Instead of optimizing predefined reward functions alone, value learning seeks to capture deeper structures underlying human judgment and decision-making.

Values must be inferred, not hard-coded.

Why It Matters

Traditional AI systems optimize:

- Explicit reward functions

- Performance metrics

- Proxy objectives

But human values:

- Are complex and context-dependent

- Often implicit rather than explicit

- May conflict or evolve over time

If AI systems optimize narrow proxies, misalignment becomes likely.

Value learning attempts to close that gap.
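A toy sketch can make the proxy problem concrete. Both functions below are hypothetical and chosen only for illustration: `proxy_metric` keeps rewarding more of an action, while `true_value` (an assumed ground truth that a real system would not have access to) peaks and then declines.

```python
# Hypothetical setup: x in [0, 10] is how hard the system pushes a measured metric.

def true_value(x: float) -> float:
    # Assumed ground truth: some of x helps, too much of x hurts.
    return x - 0.2 * x ** 2

def proxy_metric(x: float) -> float:
    # Assumed proxy: more x always scores higher.
    return x

candidates = [i * 0.5 for i in range(21)]  # 0.0, 0.5, ..., 10.0

best_for_proxy = max(candidates, key=proxy_metric)
best_for_value = max(candidates, key=true_value)

print("proxy optimum:", best_for_proxy, "true value there:", true_value(best_for_proxy))
print("value optimum:", best_for_value, "true value there:", true_value(best_for_value))
```

The optimizer that only sees the proxy selects the most extreme action, which scores worse on the assumed true value than a moderate one.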

Core Problem

We want:

Model behavior ≈ Human values

But we only observe:

- Behavioral demonstrations

- Preference comparisons

- Policy constraints

- Cultural norms

Value learning bridges the gap between what we can observe and the objective the system internally pursues.
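One common way to formalize this, offered here as a sketch rather than the only formulation, is Bayesian inference over candidate values. The symbols are assumptions introduced for illustration: \theta parameterizes a candidate value function, D is the observed data (demonstrations, comparisons, constraints, norms), and V_\theta(\pi) is how well policy \pi scores under \theta.

```latex
P(\theta \mid D) \;\propto\; P(D \mid \theta)\, P(\theta),
\qquad
\pi^{*} \in \arg\max_{\pi} \; \mathbb{E}_{\theta \sim P(\theta \mid D)} \big[ V_{\theta}(\pi) \big]
```

The likelihood P(D \mid \theta) encodes an assumption about how values produce observations, which is where most of the modeling difficulty sits.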

Minimal Conceptual Illustration

Human behavior & feedback → Value inference model → Internal value representation → Aligned policy optimization

Observed behavior becomes inferred value.

Approaches to Value Learning

1. Inverse Reinforcement Learning (IRL)

Infer reward functions from observed expert behavior.
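As a minimal sketch, the idea can be shown in a one-step setting, which is a deliberate simplification of IRL over full sequential tasks. Everything here is an illustrative assumption: the action names, the two features (speed, safety), the three candidate reward functions, and the Boltzmann-rational demonstrator with rationality parameter `beta`.

```python
import math

# Hypothetical one-step task: each action has (speed, safety) features.
ACTIONS = {
    "fast_risky": (1.0, 0.0),
    "balanced": (0.6, 0.6),
    "slow_safe": (0.0, 1.0),
}

# Assumed hypothesis set: linear reward weights over the two features.
HYPOTHESES = {
    "values_speed": (1.0, 0.0),
    "values_both": (0.5, 0.5),
    "values_safety": (0.0, 1.0),
}

def action_likelihood(action, weights, beta=5.0):
    """P(action | reward) for a Boltzmann-rational demonstrator."""
    def reward(a):
        return sum(w * f for w, f in zip(weights, ACTIONS[a]))
    z = sum(math.exp(beta * reward(a)) for a in ACTIONS)
    return math.exp(beta * reward(action)) / z

def posterior(demonstrations, beta=5.0):
    """Posterior over HYPOTHESES given observed actions, from a uniform prior."""
    log_post = {}
    for name, weights in HYPOTHESES.items():
        log_post[name] = sum(
            math.log(action_likelihood(a, weights, beta)) for a in demonstrations
        )
    # Subtract the max before exponentiating for numerical stability.
    m = max(log_post.values())
    unnorm = {name: math.exp(lp - m) for name, lp in log_post.items()}
    total = sum(unnorm.values())
    return {name: v / total for name, v in unnorm.items()}

demos = ["slow_safe", "slow_safe", "balanced", "slow_safe"]
post = posterior(demos)
print(post)
```

The demonstrations mostly pick the safe action, so the posterior concentrates on the safety-weighted hypothesis. The result depends on the assumed choice model: a different `beta` or hypothesis set would support different conclusions from the same behavior.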

2. Preference Learning

Train models on human comparisons.
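One standard way to do this is the Bradley-Terry model, which assigns each option a scalar score and treats the probability that one option is preferred as a logistic function of the score difference. The sketch below fits scores by gradient ascent on the log-likelihood; the function name `fit_bradley_terry`, the hyperparameters, and the comparison data are illustrative assumptions.

```python
import math

def fit_bradley_terry(n_items, comparisons, lr=0.1, steps=2000):
    """Fit one scalar score per item from (winner, loser) index pairs."""
    scores = [0.0] * n_items
    for _ in range(steps):
        grads = [0.0] * n_items
        for winner, loser in comparisons:
            # Model probability that `winner` is preferred over `loser`.
            p = 1.0 / (1.0 + math.exp(scores[loser] - scores[winner]))
            # Gradient of log p with respect to each score.
            grads[winner] += 1.0 - p
            grads[loser] -= 1.0 - p
        scores = [s + lr * g / len(comparisons) for s, g in zip(scores, grads)]
    return scores

# Hypothetical human comparisons over three responses (indices 0, 1, 2).
comparisons = (
    [(0, 1)] * 3 + [(1, 0)] * 1
    + [(1, 2)] * 3 + [(2, 1)] * 1
    + [(0, 2)] * 3 + [(2, 0)] * 1
)

scores = fit_bradley_terry(3, comparisons)
print(scores)
```

Scores are only identified up to an additive constant, so their ordering and differences carry the information, not their absolute values.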

3. Cooperative IRL

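Model alignment as a cooperative game: the human knows the reward function, the AI does not, and both are scored by it, so the AI has an incentive to learn the human's values through interaction.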

4. Constitutional AI

Use a written set of principles (a "constitution") to guide model self-critique and AI-generated feedback during training.

5. Debate & Oversight Frameworks

Extract normative reasoning via structured evaluation.

Value learning may be statistical, symbolic, or hybrid.

Value Learning vs Reward Modeling

| Aspect | Reward Modeling | Value Learning |
|---|---|---|
| Goal | Approximate preferences | Infer underlying values |
| Signal type | Comparative feedback | Behavioral + normative inference |
| Depth | Surface-level | Structural |
| Alignment relevance | High | Foundational |

Reward modeling fits a reward signal to expressed preferences.

Value learning seeks deeper principles.

Relationship to Inner vs Outer Alignment

Outer alignment:

- Designing reward functions to reflect values.

Inner alignment:

- Ensuring the objectives a trained model actually learns match the intended values.

Value learning attempts to strengthen both layers.

Challenges

- Values may be inconsistent.

- Preferences may conflict across groups.

- Observed behavior may not reflect true intent.

- Strategic models may exploit ambiguity.

- Cultural norms evolve.

Values are not static datasets.

Scaling Implications

As models become more capable:

- Value inference becomes harder.

- Strategic behavior may obscure true objectives.

- Long-term reasoning introduces new ethical dilemmas.

Value learning must anticipate future contexts.

Risks

- Overfitting to narrow human feedback

- Cultural bias amplification

- Proxy value distortion

- Reward hacking via superficial value mimicry

- Deceptive alignment masked as value compliance

Apparent alignment may not reflect internal value structure.

Value Learning vs Policy Compliance

Policy compliance:

- Follows explicit rules.

Value learning:

- Attempts to generalize beyond rules.

Rules constrain.

Values guide.

Long-Term Perspective

Advanced AI systems:

- May face novel scenarios.

- Must generalize beyond training data.

- Cannot rely solely on explicit reward signals.

Value learning is foundational for long-term alignment.

Summary Characteristics

| Aspect | Value Learning |
|---|---|
| Goal | Infer human values |
| Method | Preference & behavior modeling |
| Alignment level | Outer + Inner |
| Risk | Ambiguity & bias |
| Long-term importance | Very high |