Short Definition

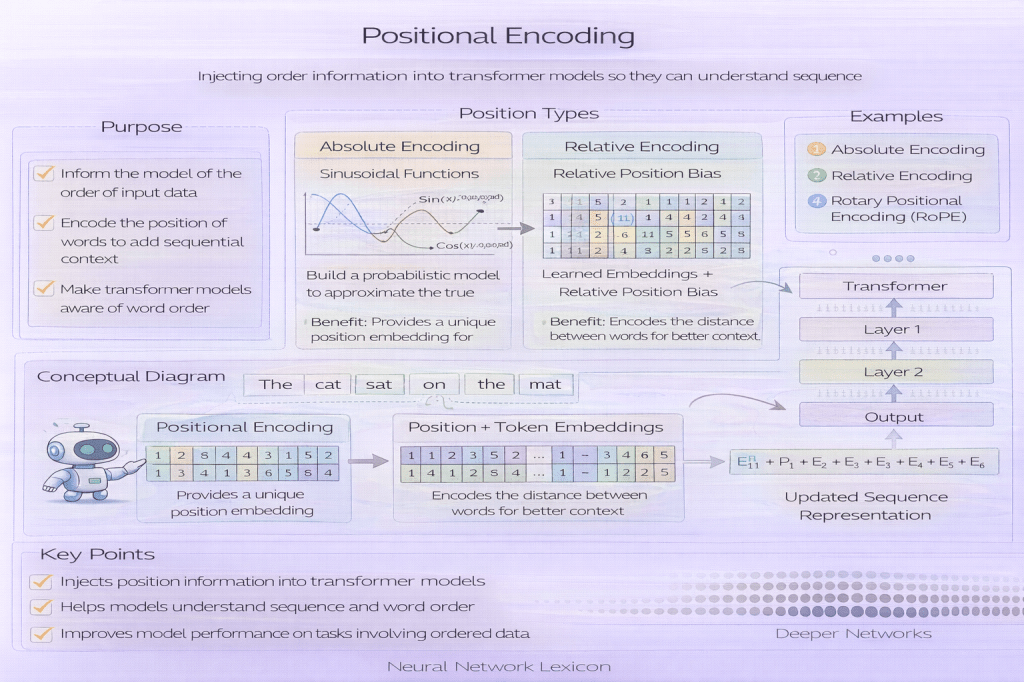

Positional encoding is a method used in Transformer models to inject information about token order into input representations.

Definition

Transformers process sequences in parallel and lack inherent recurrence or convolution to encode order. Positional encoding adds explicit position information to token embeddings so the model can distinguish between different token positions within a sequence.

Without position, attention is permutation-invariant.

Why It Matters

Self-attention treats inputs as a set:

- it does not inherently know which token comes first

- it cannot distinguish reordered sequences

For example:

“The cat chased the dog”

“The dog chased the cat”

Without positional information, these may look identical to pure attention.

Order defines meaning.

Core Mechanism

The original Transformer introduced sinusoidal positional encodings:

For position pos and dimension i:

PE(pos, 2i) = sin(pos / 10000^(2i/d_model))PE(pos, 2i+1) = cos(pos / 10000^(2i/d_model))These values are added to token embeddings:

InputEmbedding + PositionalEncodingPosition becomes part of the representation.

Minimal Conceptual Illustration

Token Embedding: [0.2, 0.8, 0.5, ...]Positional Encoding: [0.1, 0.3, 0.9, ...]Final Input: [0.3, 1.1, 1.4, ...]Position modifies meaning.

Why Sinusoidal?

Sinusoidal encodings:

- allow extrapolation to longer sequences

- encode relative distances implicitly

- provide smooth, continuous positional variation

Relative position emerges from phase differences.

Learned Positional Embeddings

An alternative approach:

- learn position embeddings directly

- treat position like a token

This often improves performance but:

- may not generalize beyond training length

- depends on maximum sequence size

Learned encodings trade flexibility for adaptability.

Absolute vs Relative Position Encoding

| Type | Description |

|---|---|

| Absolute | Each position has a unique embedding |

| Relative | Encodes distance between tokens |

| Rotary (RoPE) | Rotates embeddings to encode position |

| ALiBi | Adds linear bias to attention scores |

Modern architectures increasingly use relative methods.

Relationship to Self-Attention

Self-attention computes similarity:

Attention(Q, K, V)Positional encoding ensures:

- queries and keys incorporate position

- attention weights reflect order

- temporal relationships are learnable

Position influences attention scores.

Impact on Long-Sequence Modeling

Positional encoding:

- determines extrapolation behavior

- affects stability at longer sequence lengths

- influences model scaling

Poor positional handling limits generalization.

Common Pitfalls

- forgetting positional encoding entirely

- using fixed maximum lengths incorrectly

- assuming sinusoidal always outperforms learned

- mismanaging padding positions

Position is subtle but critical.

Positional Encoding vs Recurrence

| Aspect | Recurrence (RNN) | Positional Encoding |

|---|---|---|

| Order awareness | Inherent | Explicitly added |

| Parallelism | No | Yes |

| Memory mechanism | Hidden state | Attention weights |

Transformers externalize order instead of encoding it sequentially.

Practical Considerations

When implementing positional encoding:

- ensure alignment with embedding dimension

- handle padding tokens carefully

- consider relative encodings for long sequences

- test extrapolation performance

Order handling affects scaling behavior.

Summary Characteristics

| Aspect | Positional Encoding |

|---|---|

| Purpose | Inject order information |

| Used in | Transformers |

| Default type | Sinusoidal (original paper) |

| Modern variants | Relative, Rotary, ALiBi |

| Critical for | Long-sequence modeling |

Related Concepts

- Architecture & Representation

- Attention Mechanism

- Self-Attention

- Multi-Head Attention

- Transformer Architecture

- Representation Learning

- Scaling Laws