Short Definition

Residual connections are skip pathways that add an input directly to the output of a transformation, enabling stable learning by preserving information and gradients across layers.

Definition

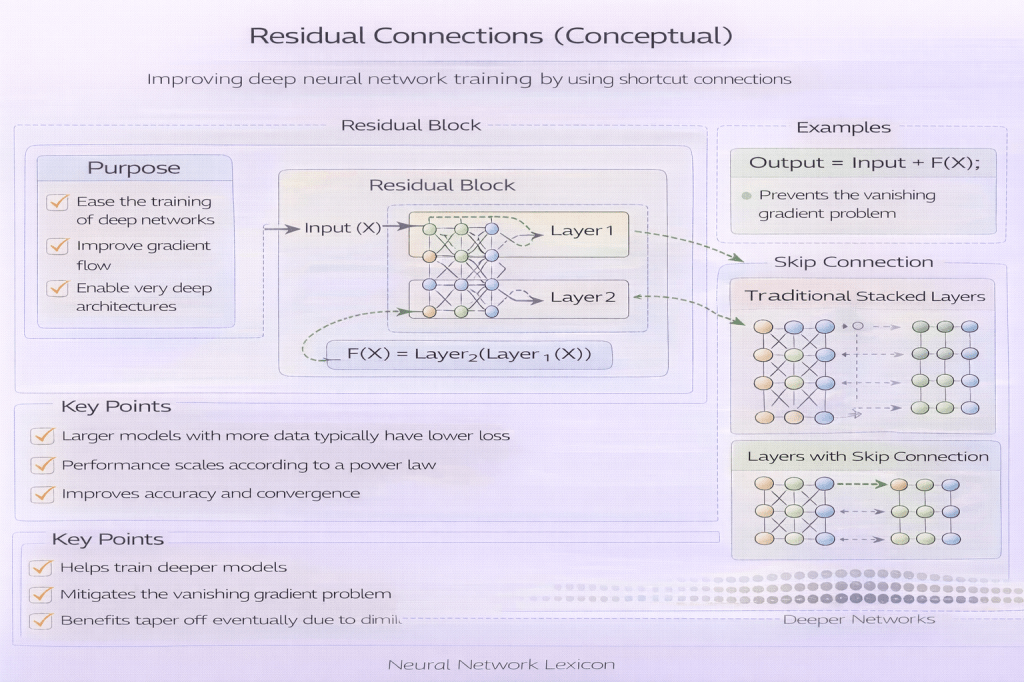

A residual connection introduces an identity shortcut that bypasses one or more layers and is combined with the transformed signal, typically by element-wise addition. Instead of learning a full mapping H(x) directly, the network learns a residual F(x) = H(x) - x: how the output should differ from the input.

Learning corrections is easier than learning replacements.

Why It Matters

As networks deepen, optimization becomes difficult: gradients can vanish, and very deep plain networks can show degradation, where adding layers raises training error rather than lowering it. Residual connections mitigate these issues by:

- improving gradient flow

- stabilizing optimization

- allowing layers to learn near-identity mappings

- enabling much deeper architectures

Depth becomes usable.

Core Mechanism

A residual connection computes:

y = x + F(x)

where:

- x is the input (identity path)

- F(x) is the learned transformation

The shortcut preserves signal continuity.
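The formula above can be sketched in a few lines of plain Python. This is a minimal illustration, not a framework implementation: `residual` and `scale_by_tenth` are hypothetical names, and the "learned" transformation is a fixed stand-in function.

```python
from typing import Callable, List

Vector = List[float]


def residual(x: Vector, f: Callable[[Vector], Vector]) -> Vector:
    """Return y = x + F(x), adding the input element-wise to F's output."""
    fx = f(x)
    if len(fx) != len(x):
        raise ValueError("F(x) must have the same length as x to be added to it")
    return [xi + fi for xi, fi in zip(x, fx)]


def scale_by_tenth(x: Vector) -> Vector:
    # Hypothetical stand-in for a learned transformation F.
    return [0.1 * xi for xi in x]


# The output stays close to the input; F only contributes a small correction.
y = residual([1.0, -2.0, 3.0], scale_by_tenth)
```

The length check reflects the one hard requirement of the additive form: the shortcut and the transformed signal must have matching shapes.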

Minimal Conceptual Illustration

    Input ──┬──→ Layers ──→ Add ──→ Output
            │                ↑
            └────────────────┘

Identity Mapping

Residual connections preserve an identity mapping by default. If the learned transformation contributes little, the layer effectively becomes transparent.

Layers can opt out.
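A small sketch of this "opt out" behavior, assuming the transformation is a single linear layer whose weights are all zero (the helper names are illustrative only). With the shortcut, the block passes its input through unchanged; without it, the same layer erases the signal.

```python
def linear(weights, x):
    """Plain matrix-vector product: one output per weight row."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def residual_linear(weights, x):
    """Residual block around the linear layer: y = x + W x."""
    return [xi + fi for xi, fi in zip(x, linear(weights, x))]


x = [0.5, -1.0, 2.0]
zero_weights = [[0.0] * 3 for _ in range(3)]  # F contributes nothing

with_shortcut = residual_linear(zero_weights, x)   # equals x: the block is transparent
without_shortcut = linear(zero_weights, x)         # all zeros: the input is lost
```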

Gradient Flow Benefits

Residual connections:

- reduce gradient attenuation

- provide direct gradient paths

- make optimization less sensitive to depth and initialization

Gradients find a path.
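A toy calculation can make the direct gradient path concrete. Assume a stack of scalar layers, each with local slope dF/dx = 0.01 (a deliberately simplified, hypothetical setup). By the chain rule, a plain stack multiplies the slopes, while a residual stack multiplies (1 + slope), because each block's derivative is dy/dx = 1 + dF/dx.

```python
def plain_gradient(local_slopes):
    """Gradient through stacked layers y = F(x): product of the local slopes."""
    grad = 1.0
    for slope in local_slopes:
        grad *= slope
    return grad


def residual_gradient(local_slopes):
    """Gradient through stacked blocks y = x + F(x): product of (1 + slope)."""
    grad = 1.0
    for slope in local_slopes:
        grad *= 1.0 + slope
    return grad


slopes = [0.01] * 20  # 20 layers, each with a small local slope

g_plain = plain_gradient(slopes)        # vanishes, on the order of 1e-40
g_residual = residual_gradient(slopes)  # stays near 1, about 1.22
```

The identity term keeps the product from collapsing toward zero. Real networks are not scalar or linear, so this shows the mechanism rather than predicting actual gradient magnitudes.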

Residual Connections vs Skip Connections

- Residual connections typically involve additive identity shortcuts

- "Skip connection" is the broader term and also covers concatenation, gating, and attention-based shortcuts

Residuals are a specific, additive case.
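The difference between the additive case and one other kind of skip connection, concatenation, shows up directly in the output shape. A minimal sketch with hypothetical helper names:

```python
def additive_shortcut(x, fx):
    """Residual form: element-wise sum, output has the same length as x."""
    return [a + b for a, b in zip(x, fx)]


def concat_shortcut(x, fx):
    """Concatenation form: input and features are kept side by side."""
    return x + fx  # list concatenation, not arithmetic


x = [1.0, 2.0]
fx = [0.5, -0.5]  # stand-in for the transformed signal F(x)

added = additive_shortcut(x, fx)   # [1.5, 1.5], length 2
joined = concat_shortcut(x, fx)    # [1.0, 2.0, 0.5, -0.5], length 4
```

Addition keeps the width fixed, so blocks can be stacked without changing dimensions. Concatenation grows the width and leaves it to the next layer to mix the two parts.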

Relationship to Normalization

Residual connections interact strongly with normalization layers. The placement of normalization relative to the residual path (pre-norm vs post-norm) affects stability, training dynamics, and robustness.

Ordering shapes behavior.
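The two orderings can be sketched as follows. This assumes a simple layer normalization without learned scale or shift, and the function names are illustrative. Post-norm normalizes the sum, so the shortcut itself passes through the normalization. Pre-norm normalizes only the input to F, leaving the identity path untouched.

```python
import math


def layer_norm(x, eps=1e-5):
    """Normalize a vector to zero mean and roughly unit variance."""
    mean = sum(x) / len(x)
    var = sum((xi - mean) ** 2 for xi in x) / len(x)
    return [(xi - mean) / math.sqrt(var + eps) for xi in x]


def post_norm_block(x, f):
    """Post-norm: y = Norm(x + F(x))."""
    return layer_norm([xi + fi for xi, fi in zip(x, f(x))])


def pre_norm_block(x, f):
    """Pre-norm: y = x + F(Norm(x))."""
    return [xi + fi for xi, fi in zip(x, f(layer_norm(x)))]


def zero_transform(v):
    # Stand-in for a transformation that currently contributes nothing.
    return [0.0] * len(v)


x = [1.0, 2.0, 3.0, 4.0]
pre = pre_norm_block(x, zero_transform)    # exactly x: identity path is preserved
post = post_norm_block(x, zero_transform)  # normalized x: the shortcut is rescaled
```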

Conceptual Role Across Architectures

Residual connections appear in:

- CNNs (ResNet)

- Transformers

- diffusion models

- graph neural networks

- deep reinforcement learning agents

Residual learning is architecture-agnostic.

Optimization Perspective

From an optimization view, residual connections:

- tend to smooth the loss landscape

- reduce pathological curvature

- make deep models behave like ensembles of shallow paths

Optimization becomes smoother.
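The "ensemble of shallow paths" view can be illustrated by counting paths. Assume each of n residual blocks is either traversed through its transformation or bypassed through its shortcut. That gives 2^n input-to-output paths, and the number that pass through exactly k transformations is the binomial coefficient C(n, k). The function name below is hypothetical.

```python
from math import comb


def path_counts(num_blocks):
    """Count paths by how many transformations they pass through (0..num_blocks)."""
    return [comb(num_blocks, k) for k in range(num_blocks + 1)]


counts = path_counts(3)   # [1, 3, 3, 1]
total_paths = sum(counts)  # 8, i.e. 2 ** 3
```

Because the counts follow a binomial distribution, most paths in a deep residual stack are of moderate length rather than full depth.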

Limitations

Residual connections do not:

- guarantee better generalization

- replace thoughtful architecture design

- solve global reasoning limitations

- eliminate the need for data and evaluation rigor

Stability is not sufficiency.

Common Pitfalls

- adding residuals without purpose

- ignoring dimensional alignment

- misplacing normalization layers

- assuming residuals prevent overfitting

- over-deepening architectures unnecessarily

Residuals enable depth but do not justify it.
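The dimensional-alignment pitfall is easy to reproduce: if F changes the width of the signal, x and F(x) can no longer be added. One common remedy, shown here as an assumption rather than the only option, is a linear projection on the shortcut so that both branches match. The helper names and the projection matrix are illustrative.

```python
def matvec(weights, x):
    """Matrix-vector product used for the shortcut projection."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def residual_with_projection(x, fx, projection=None):
    """Add the shortcut to F(x), projecting x first when a matrix is given."""
    shortcut = x if projection is None else matvec(projection, x)
    if len(shortcut) != len(fx):
        raise ValueError("shortcut and F(x) have different lengths")
    return [s + f for s, f in zip(shortcut, fx)]


x = [1.0, 2.0, 3.0]   # width 3
fx = [0.5, 0.5]       # stand-in for F(x) with width 2

try:
    residual_with_projection(x, fx)  # identity shortcut: widths do not match
    mismatch_detected = False
except ValueError:
    mismatch_detected = True

# Hypothetical 2x3 projection that keeps the first two components of x.
projection = [[1.0, 0.0, 0.0],
              [0.0, 1.0, 0.0]]
y = residual_with_projection(x, fx, projection)  # [1.5, 2.5]
```

In a trained model the projection would be learned, and the shortcut is then no longer a pure identity path.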

Summary Characteristics

| Aspect | Residual Connections |
|---|---|
| Core function | Signal preservation |
| Gradient effect | Strong stabilization |
| Learning target | Residual function |
| Architectural scope | Universal |
| Risk | Encouraging unnecessary depth |

Related Concepts

- Architecture & Representation

- Residual Networks (ResNet)

- Optimization Stability

- Vanishing Gradients

- Normalization Layers

- Pre-Norm vs Post-Norm Architectures

- Deep Learning Architectures