Short Definition

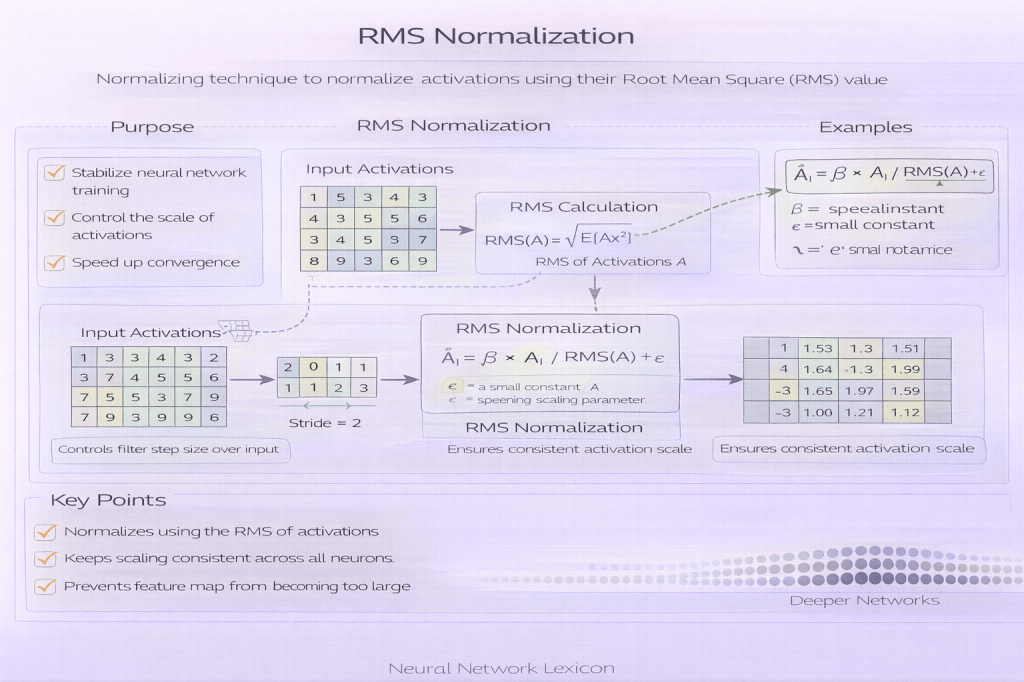

RMS normalization normalizes activations using their root-mean-square without centering them.

Definition

RMS normalization (Root Mean Square Normalization) is a normalization technique that scales activations based on their root-mean-square value across a feature dimension, without subtracting the mean. Unlike batch normalization and layer normalization, RMS normalization does not perform mean centering and typically omits additive bias parameters.

RMS normalization normalizes scale, not location.

Why It Matters

Mean centering is not always necessary for stable optimization. RMS normalization simplifies normalization by focusing solely on controlling activation magnitude, reducing computational overhead while preserving most of the stability benefits of layer normalization.

It is widely used in modern large-scale language models.

How RMS Normalization Works

For a feature vector ( x = (x_1, \dots, x_d) ):

- Compute the root-mean-square:

[ \text{RMS}(x) = \sqrt{\frac{1}{d} \sum_{i=1}^d x_i^2} ] - Normalize activations by dividing by the RMS

- Apply a learned scale parameter

No mean subtraction is performed.

Minimal Conceptual Formula

RMSNorm(x) = γ · x / RMS(x)RMS Normalization vs Layer Normalization

- RMS Normalization

- no mean subtraction

- fewer computations

- slightly less expressive

- deterministic and batch-independent

- common in LLMs

- Layer Normalization

- mean and variance normalization

- more expressive

- slightly higher computational cost

RMS normalization trades expressiveness for simplicity and efficiency.

Where RMS Normalization Is Used

RMS normalization is commonly used in:

- large language models

- transformer-based architectures

- autoregressive sequence models

- settings where efficiency and stability are critical

Many modern transformer variants rely on RMSNorm.

Relationship to Optimization Stability

RMS normalization stabilizes optimization by controlling activation scale, which helps prevent exploding gradients and reduces sensitivity to learning rate. While it does not correct mean drift, empirical results show this is often unnecessary in transformer-style architectures.

Stability can be achieved without centering.

Interaction with Residual Connections

RMS normalization is frequently paired with residual connections in pre-norm transformer blocks. The combination provides stable gradient flow while keeping computation minimal.

This pairing is common in very deep models.

Effects on Generalization

RMS normalization primarily improves optimization efficiency. Its effects on generalization are indirect and architecture-dependent, usually mediated through smoother training and better convergence.

It is not a regularizer by design.

Computational Characteristics

- fewer operations than layer normalization

- no dependence on batch statistics

- consistent behavior across training and inference

- well-suited for large-scale distributed training

Efficiency is a core motivation.

Common Pitfalls

- assuming RMSNorm is a drop-in replacement everywhere

- ignoring mean drift in architectures where it matters

- mixing RMSNorm with batch-dependent layers inconsistently

- misunderstanding its reduced expressiveness

- omitting normalization placement details in reporting

Normalization choice is architectural, not interchangeable.

Relationship to Other Normalization Methods

RMS normalization contrasts with:

- batch normalization (batch-dependent)

- layer normalization (mean + variance)

- group normalization (channel groups)

- instance normalization (per-channel)

Each normalization encodes different assumptions.

Related Concepts

- Architecture & Representation

- Normalization Layers

- Layer Normalization

- Batch Normalization

- Residual Connections

- Optimization Stability

- Transformers