Short Definition

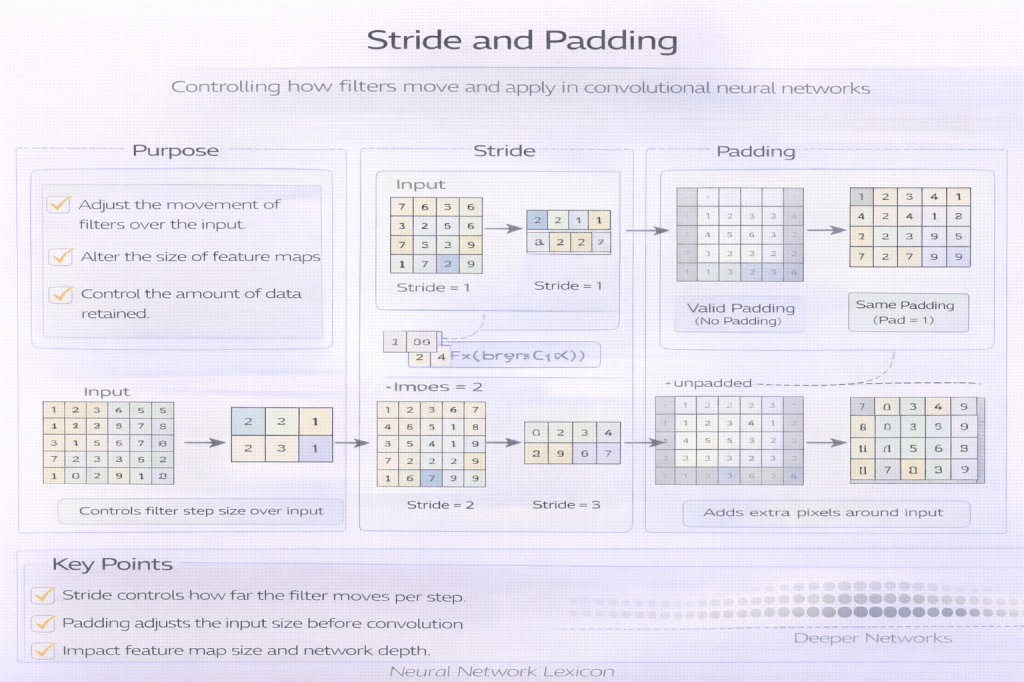

Stride controls how far a convolutional or pooling window moves across the input, while padding controls how input boundaries are handled during the operation.

Definition

Stride specifies the step size with which a kernel is applied across an input tensor, determining the degree of downsampling.

Padding specifies whether—and how—additional values are added around the input borders to control output size and boundary effects.

Stride sets resolution; padding sets coverage.

Why It Matters

Stride and padding jointly determine:

- output spatial dimensions

- information retention vs downsampling

- effective receptive field growth

- boundary bias and edge behavior

- computational cost

Small choices have large architectural consequences.

Stride

What Stride Does

Stride defines how many input units the kernel advances between successive applications; with stride s, it skips s − 1 positions each step.

- stride = 1: dense coverage

- stride > 1: downsampling

Stride compresses space by skipping positions.
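The effect of the step size can be seen in a minimal 1D sketch. The function name `conv1d` and the sample values are illustrative assumptions, not part of any particular library; the operation is an unpadded cross-correlation, which is what most deep learning frameworks call convolution.

```python
def conv1d(signal, kernel, stride=1):
    """Unpadded 1D cross-correlation with a configurable stride (illustrative helper)."""
    k = len(kernel)
    out = []
    # The window start advances by `stride` input positions each step.
    for start in range(0, len(signal) - k + 1, stride):
        window = signal[start:start + k]
        out.append(sum(w * x for w, x in zip(kernel, window)))
    return out


signal = [1, 2, 3, 4, 5, 6, 7]
kernel = [1, 0, -1]

dense = conv1d(signal, kernel, stride=1)    # one output per valid window position
strided = conv1d(signal, kernel, stride=2)  # every second window position only
```

With stride 2 the result is the stride-1 output with every other element dropped, which is why a strided layer acts as a downsampler.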

Effects of Increasing Stride

Increasing stride:

- reduces output resolution

- makes the effective receptive field grow faster in subsequent layers

- lowers computation

- risks losing fine-grained information

Downsampling trades detail for efficiency.
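As a rough illustration of the efficiency side of this trade, the sketch below counts multiply-accumulate operations for a single square 2D convolution. The helper names and the layer shape (224×224 input, 3×3 kernel, 64 channels in and out) are assumptions chosen for the example, and bias terms are ignored.

```python
def conv_output_size(size, kernel, stride=1, padding=0):
    """Output length along one spatial axis (illustrative helper)."""
    return (size + 2 * padding - kernel) // stride + 1


def conv2d_macs(size, kernel, stride, padding, c_in, c_out):
    """Multiply-accumulates for a square 2D convolution, ignoring bias."""
    out = conv_output_size(size, kernel, stride, padding)
    # Each output position in each output channel needs kernel*kernel*c_in MACs.
    return out * out * kernel * kernel * c_in * c_out


dense_macs = conv2d_macs(224, kernel=3, stride=1, padding=1, c_in=64, c_out=64)
strided_macs = conv2d_macs(224, kernel=3, stride=2, padding=1, c_in=64, c_out=64)
ratio = dense_macs / strided_macs
```

For this shape, doubling the stride halves each spatial dimension, so the layer needs about a quarter of the operations.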

Padding

What Padding Does

Padding adds values (typically zeros) around the input border before applying the operation. It controls how kernels interact with edges.

Padding protects boundaries.

Common Padding Types

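- Valid padding: no padding; at stride 1 the output shrinks by Kernel − 1

- Same padding: enough padding to preserve the spatial size at stride 1 (for an odd kernel, (Kernel − 1) / 2 on each side)

- Zero padding: pads with zeros (the most common fill value)

- Reflect / replicate padding: mirrors or repeats edge values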

Padding encodes boundary assumptions.
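The fill strategies above can be compared on a short 1D sequence. This is a minimal sketch in plain Python; `pad_1d` and its mode names are hypothetical, and the reflect variant here mirrors around the edge element without repeating it, which is one common convention.

```python
def pad_1d(values, pad, mode="zero"):
    """Pad a 1D sequence on both sides (illustrative helper, not a library API)."""
    values = list(values)
    if mode == "zero":
        left, right = [0] * pad, [0] * pad
    elif mode == "replicate":
        # Repeat the edge value outward.
        left, right = [values[0]] * pad, [values[-1]] * pad
    elif mode == "reflect":
        # Mirror around the edge element without repeating it.
        if pad >= len(values):
            raise ValueError("reflect padding requires pad < len(values)")
        left = values[pad:0:-1]
        right = values[-2:-pad - 2:-1]
    else:
        raise ValueError(f"unknown mode: {mode}")
    return left + values + right


x = [1, 2, 3, 4]
zero_padded = pad_1d(x, 2, "zero")            # zeros on both sides
replicate_padded = pad_1d(x, 2, "replicate")  # edge values repeated
reflect_padded = pad_1d(x, 2, "reflect")      # interior values mirrored
```

Each mode produces the same output length but implies a different assumption about what lies beyond the border.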

Minimal Conceptual Illustration

Input + Padding → Sliding Kernel (Stride s) → Output

Output Size Intuition

For a 1D illustration:

Output size = floor((Input + 2×Padding − Kernel) / Stride) + 1

Stride and padding jointly define geometry.
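The output-size rule can be checked with a few lines of Python. The function name `conv_output_size` is an assumption for this sketch; it applies the formula along one spatial axis with the division rounded down, and assumes the same padding on both sides.

```python
def conv_output_size(size, kernel, stride=1, padding=0):
    """Output length along one axis, with symmetric padding (illustrative helper)."""
    if size + 2 * padding < kernel:
        raise ValueError("kernel does not fit inside the padded input")
    # Integer division implements the floor: leftover positions are dropped.
    return (size + 2 * padding - kernel) // stride + 1


no_padding = conv_output_size(7, kernel=3)                       # output shrinks
same_size = conv_output_size(7, kernel=3, padding=1)             # size preserved at stride 1
downsampled = conv_output_size(7, kernel=3, stride=2, padding=1)
stem_like = conv_output_size(224, kernel=7, stride=2, padding=3)
```

The last call uses a 7-wide kernel with stride 2 and padding 3 on a 224-wide input, a configuration chosen here only as a familiar-looking example.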

Relationship to Receptive Fields

- Larger stride increases receptive field growth per layer

- Padding preserves alignment and coverage at edges

- Excessive stride can create blind spots: when the stride exceeds the kernel size, some input positions are never covered by any window

Context grows faster with stride—but less precisely.
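A small calculation makes the growth rate concrete. The sketch below uses the standard recurrence for stacked layers without dilation: each layer widens the receptive field by (kernel − 1) times the current spacing between adjacent outputs, and that spacing is multiplied by the layer's stride. The function name and the layer stacks are illustrative assumptions.

```python
def receptive_field(layers):
    """Receptive field of the final output along one axis.

    `layers` is a list of (kernel, stride) pairs ordered from input to output.
    Dilation is not modelled in this sketch.
    """
    rf = 1    # a single input position sees only itself
    jump = 1  # spacing, in input units, between adjacent outputs of the current layer
    for kernel, stride in layers:
        rf += (kernel - 1) * jump
        jump *= stride
    return rf


three_dense = receptive_field([(3, 1), (3, 1), (3, 1)])
three_strided = receptive_field([(3, 2), (3, 2), (3, 2)])
```

Three 3-wide layers at stride 1 see 7 input positions, while the same stack at stride 2 sees 15, at one eighth of the output resolution.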

Stride vs Pooling

Stride and pooling both downsample, but:

- a strided convolution downsamples with learned kernel weights (the stride itself is a fixed hyperparameter)

- pooling is fixed and non-learnable

Modern architectures often prefer strided convolutions.
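The distinction can be sketched in 1D with plain Python. Both helpers below are hypothetical; the point is that max pooling always applies the same fixed rule, whereas the strided convolution's output depends on weights that training would adjust. The two weight vectors are hand-picked stand-ins for learned values.

```python
def strided_conv1d(signal, weights, stride):
    """Unpadded 1D cross-correlation; `weights` stands in for learned parameters."""
    k = len(weights)
    return [
        sum(w * x for w, x in zip(weights, signal[i:i + k]))
        for i in range(0, len(signal) - k + 1, stride)
    ]


def max_pool1d(signal, size, stride):
    """Fixed max pooling: no parameters to learn."""
    return [max(signal[i:i + size]) for i in range(0, len(signal) - size + 1, stride)]


signal = [1.0, 3.0, 2.0, 8.0, 5.0, 4.0]

pooled = max_pool1d(signal, size=2, stride=2)
averaged = strided_conv1d(signal, [0.5, 0.5], stride=2)     # behaves like average pooling
differenced = strided_conv1d(signal, [-1.0, 1.0], stride=2) # behaves like a local difference
```

All three outputs have the same length, but only the convolution can change what it extracts while downsampling.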

Boundary Effects and Bias

Without padding:

- edge pixels fall inside fewer kernel windows, so they contribute less to the output

- border information is underrepresented

Padding mitigates edge bias at the cost of artificial values.
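Edge bias can be made visible by counting how many kernel windows cover each input position. This is a minimal 1D sketch; `coverage_counts` is a hypothetical helper, and padded positions are excluded from the counts because they hold artificial values.

```python
def coverage_counts(n, kernel, stride=1, padding=0):
    """Number of kernel windows that include each of the n real input positions."""
    counts = [0] * n
    padded_length = n + 2 * padding
    for start in range(0, padded_length - kernel + 1, stride):
        for p in range(start, start + kernel):
            i = p - padding  # map padded coordinate back to the original input
            if 0 <= i < n:
                counts[i] += 1
    return counts


without_padding = coverage_counts(5, kernel=3)
with_padding = coverage_counts(5, kernel=3, padding=1)
```

Without padding the counts are 1, 2, 3, 2, 1, so the outermost positions are seen a third as often as the centre. With one unit of padding they become 2, 3, 3, 3, 2: the gap narrows but does not disappear, and the added windows include artificial values.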

Stride and Padding in Modern Architectures

Modern CNNs and hybrids:

- use stride early for efficient downsampling

- apply padding to preserve alignment

- rely on careful scheduling of resolution changes

- combine with normalization and residual connections

Geometry is designed, not incidental.

Common Pitfalls

- excessive early downsampling

- ignoring boundary bias

- mixing stride and pooling redundantly

- assuming padding is neutral

- misaligning feature maps across skip connections

Spatial design errors compound.
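The skip-connection pitfall can be caught with simple shape arithmetic before any model is built. The sketch below assumes a hypothetical residual block whose main branch downsamples a 56-wide feature map with a 3-wide, stride-2 convolution; the sizes and names are illustrative only.

```python
def conv_output_size(size, kernel, stride=1, padding=0):
    """Output length along one spatial axis (illustrative helper)."""
    return (size + 2 * padding - kernel) // stride + 1


input_size = 56

# Main branch: 3-wide kernel, stride 2, padding 1.
main_branch = conv_output_size(input_size, kernel=3, stride=2, padding=1)

# An identity skip keeps the input resolution, so it cannot be added to the main branch.
identity_skip = input_size

# A 1-wide, stride-2 projection on the skip path restores agreement.
projected_skip = conv_output_size(input_size, kernel=1, stride=2, padding=0)

shapes_match_identity = main_branch == identity_skip
shapes_match_projection = main_branch == projected_skip
```

Checking sizes this way for every branch that is later added or concatenated is a cheap guard against misaligned feature maps.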

Summary Characteristics

| Aspect | Stride | Padding |
|---|---|---|
| Primary role | Downsampling | Boundary handling |
| Learnable | Indirectly | No |
| Affects resolution | Yes | Indirectly |
| Risk | Information loss | Boundary artifacts |

Related Concepts

- Architecture & Representation

- Convolution Operation

- Pooling Layers

- Receptive Fields

- Feature Maps

- Dilated Convolutions

- Residual Networks (ResNet)

- Vision Architectures