Short Definition

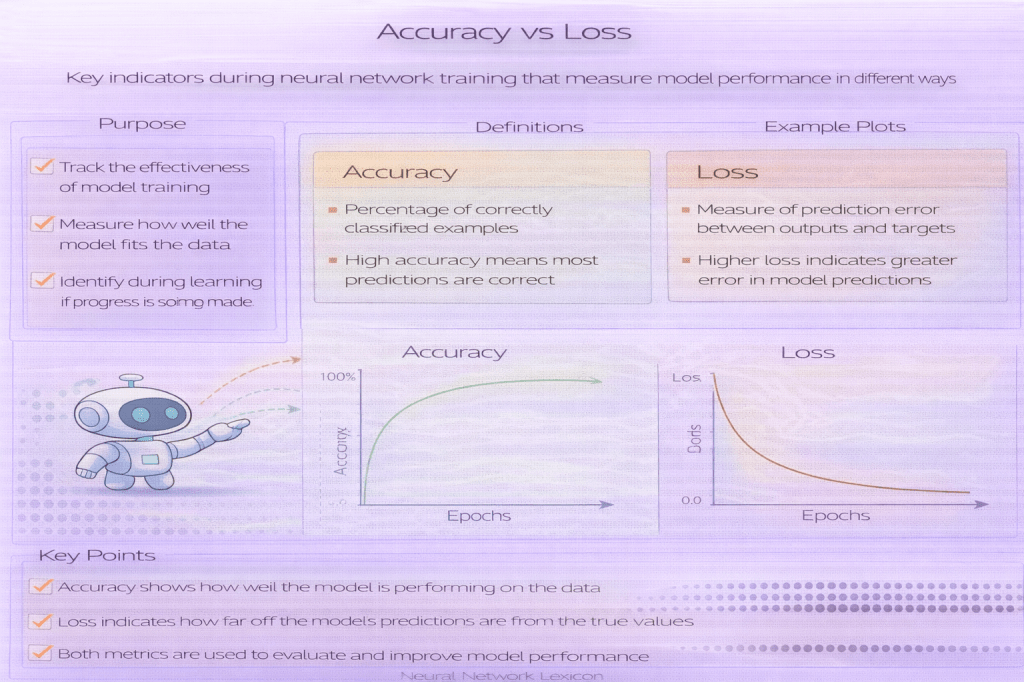

Accuracy measures correctness; loss measures error magnitude.

Definition

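Accuracy is the fraction of predictions that are correct, while loss quantifies how wrong the predictions are, typically penalizing confident mistakes more heavily than hesitant ones. Loss functions such as cross-entropy vary smoothly with the model's parameters, which gives gradient-based optimizers a usable signal. Accuracy changes only when a prediction crosses the decision threshold, so its gradient is zero almost everywhere, which makes it unsuitable as a training objective.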

Both metrics are necessary but serve different purposes: loss is what the model is trained on, and accuracy is how the result is reported.
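To make the distinction concrete, the sketch below computes both metrics on the same labels for two hypothetical sets of predicted probabilities. The data, the 0.5 threshold, and the function names `accuracy` and `binary_cross_entropy` are assumptions chosen for illustration, not part of any particular library.

```python
import math

def accuracy(probs, labels, threshold=0.5):
    """Fraction of predictions whose thresholded class matches the label."""
    correct = sum((p >= threshold) == bool(y) for p, y in zip(probs, labels))
    return correct / len(labels)

def binary_cross_entropy(probs, labels):
    """Mean negative log-likelihood of the true labels."""
    total = 0.0
    for p, y in zip(probs, labels):
        total -= math.log(p) if y == 1 else math.log(1.0 - p)
    return total / len(labels)

labels = [1, 0, 1, 1]
confident = [0.95, 0.05, 0.90, 0.40]  # hypothetical model A
hesitant = [0.60, 0.45, 0.55, 0.40]   # hypothetical model B

for name, probs in [("confident", confident), ("hesitant", hesitant)]:
    acc = accuracy(probs, labels)
    loss = binary_cross_entropy(probs, labels)
    print(f"{name}: accuracy={acc:.2f} loss={loss:.3f}")
```

Both sets of predictions get three of four examples right, so their accuracy is identical, but the hesitant predictions have a higher loss because they assign less probability to the correct classes.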

Why It Matters

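Gradient-based training needs a signal that responds to small parameter changes. Accuracy rarely changes when parameters move slightly, so optimizing it directly usually stalls. In practice a differentiable loss is minimized, and accuracy is tracked alongside it.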
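A small numerical sketch of why this happens, assuming a hypothetical one-feature logistic model with a fixed bias of -1 and made-up data: nudging the weight `w` leaves accuracy unchanged, while the cross-entropy loss responds to every nudge.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

xs = [-2.0, -1.0, 0.5, 1.5, 3.0]  # hypothetical 1-D inputs
ys = [0, 0, 1, 1, 1]              # hypothetical binary labels
BIAS = -1.0                       # fixed for illustration

def accuracy_at(w):
    """Accuracy of the model p = sigmoid(w * x + BIAS) at threshold 0.5."""
    hits = sum((sigmoid(w * x + BIAS) >= 0.5) == bool(y) for x, y in zip(xs, ys))
    return hits / len(ys)

def loss_at(w):
    """Mean cross-entropy loss of the same model."""
    total = 0.0
    for x, y in zip(xs, ys):
        p = sigmoid(w * x + BIAS)
        total -= math.log(p) if y == 1 else math.log(1.0 - p)
    return total / len(ys)

for w in (0.50, 0.51, 0.52):
    print(f"w={w:.2f} accuracy={accuracy_at(w):.2f} loss={loss_at(w):.4f}")
```

Accuracy is the same at all three weights because no prediction crosses the threshold, so an optimizer looking only at accuracy sees no reason to prefer one over another. The loss decreases slightly with each step, which is the direction information training relies on.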

How It Works (Conceptually)

- Loss guides parameter updates through its gradient

- Accuracy evaluates the resulting predictions

- The two metrics complement each other: one drives training, the other reports its outcome
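The three points above can be sketched as a tiny training loop. This is a minimal illustration, assuming logistic regression trained by plain gradient descent on a made-up dataset; the starting values, learning rate, and step count are arbitrary choices.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical toy dataset: one feature, binary label.
xs = [-2.0, -1.0, -0.5, 0.5, 1.0, 2.0]
ys = [0, 0, 0, 1, 1, 1]

def evaluate(w, b):
    """Return (mean cross-entropy loss, accuracy) for the given parameters."""
    probs = [sigmoid(w * x + b) for x in xs]
    loss = -sum(math.log(p) if y == 1 else math.log(1.0 - p)
                for p, y in zip(probs, ys)) / len(ys)
    acc = sum((p >= 0.5) == bool(y) for p, y in zip(probs, ys)) / len(ys)
    return loss, acc

w, b, lr = -1.0, 0.0, 0.5  # deliberately poor start; arbitrary learning rate
history = []
for step in range(50):
    probs = [sigmoid(w * x + b) for x in xs]
    # Gradient of the mean cross-entropy loss with respect to w and b.
    grad_w = sum((p - y) * x for p, y, x in zip(probs, ys, xs)) / len(ys)
    grad_b = sum(p - y for p, y in zip(probs, ys)) / len(ys)
    w -= lr * grad_w  # the loss gradient drives the update
    b -= lr * grad_b
    history.append(evaluate(w, b))  # accuracy is only measured, never differentiated

print("after first step (loss, accuracy):", history[0])
print("after last step  (loss, accuracy):", history[-1])
```

Only the loss gradient appears in the update rule. Accuracy is computed after each step purely as a readout of how the current parameters perform.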

Minimal Python Example

Python

accuracy = correct / total

Common Pitfalls

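- Using accuracy as a loss function, which gives the optimizer no gradient to follow

- Ignoring loss trends, such as validation loss rising while accuracy stays flat

- Misinterpreting evaluation metrics, for example treating high accuracy as proof that predictions are well calibrated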
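As one way to avoid overlooking loss trends, the sketch below flags a run of consecutive increases in a recorded loss history. The helper name `loss_rising`, the `patience` parameter, and the validation numbers are all hypothetical and serve only to illustrate the idea.

```python
def loss_rising(losses, patience=3):
    """True if each of the last `patience` losses exceeds the one before it."""
    if len(losses) <= patience:
        return False  # not enough history to judge a trend
    recent = losses[-(patience + 1):]
    return all(later > earlier for earlier, later in zip(recent, recent[1:]))

# Hypothetical validation history: accuracy plateaus while loss climbs.
val_accuracy = [0.81, 0.86, 0.88, 0.88, 0.88, 0.88]
val_loss = [0.52, 0.41, 0.37, 0.39, 0.43, 0.48]

if loss_rising(val_loss):
    print("validation loss has risen for 3 checks in a row; accuracy alone hides this")
```

In this made-up history the accuracy column looks stable, and only the loss column reveals that predictions are getting worse.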

Related Concepts

- Loss Function

- Evaluation Metrics

- Training Monitoring