Short Definition

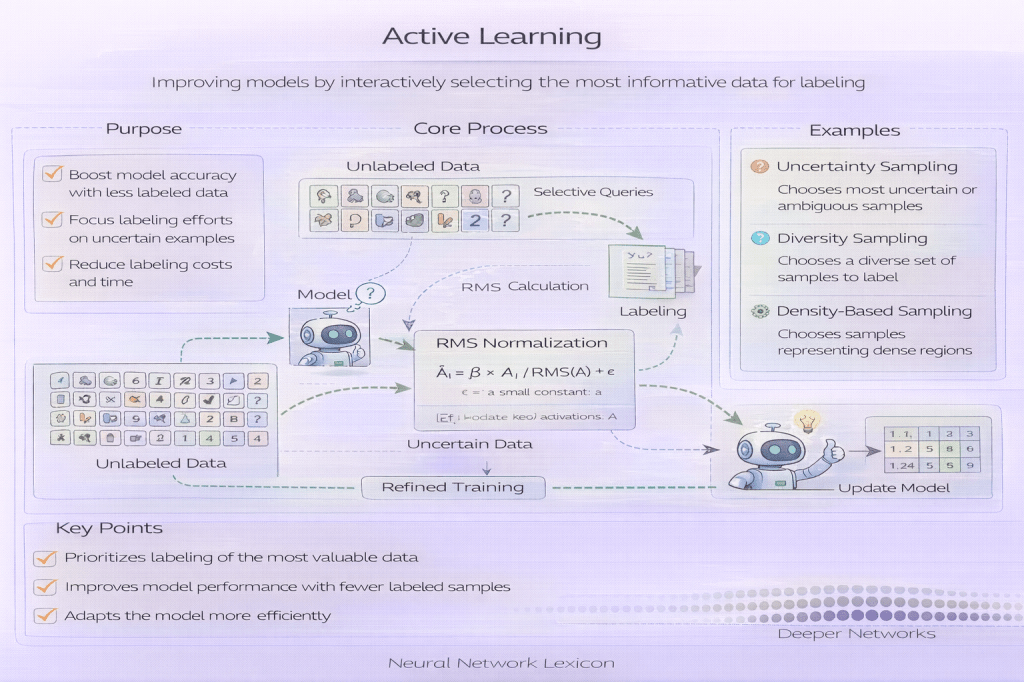

Active learning is a training paradigm in which the model selects which unlabeled data points should be sent for labeling.

Definition

Active learning is a machine learning approach in which the model (or an acquisition strategy) actively identifies unlabeled samples whose labels would be most valuable for improving performance. Instead of labeling data uniformly at random, active learning focuses labeling effort on informative, uncertain, or representative examples.

Active learning optimizes label efficiency, not raw data volume.

Why It Matters

Labeling data can be expensive, slow, or limited by expert availability, and many datasets contain large amounts of redundant information. Active learning aims to reduce labeling cost by reaching comparable performance with fewer labeled samples.

It is especially effective when labels are scarce or costly.

How Active Learning Works

A typical active learning loop:

- Train an initial model on a small labeled dataset

- Apply the model to an unlabeled data pool

- Score samples using an acquisition function

- Select a subset of samples for labeling

- Add newly labeled data to the training set

- Retrain the model and repeat

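Each round concentrates labeling effort on the samples the current model finds hardest, so performance can improve faster per label than with random selection.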
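The loop above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: `active_learning_loop`, `fit`, `acquisition`, and `oracle` are hypothetical names, the model is a toy 1-D threshold, and the oracle function stands in for a human annotator.

```python
from typing import Callable, List, Tuple

def active_learning_loop(
    labeled: List[Tuple[float, int]],
    pool: List[float],
    fit: Callable,          # hypothetical: labeled pairs -> model
    acquisition: Callable,  # hypothetical: (model, x) -> score, higher = more valuable
    oracle: Callable,       # hypothetical: x -> label (stands in for an annotator)
    batch_size: int,
    rounds: int,
):
    labeled, pool = list(labeled), list(pool)
    model = fit(labeled)                                  # train the initial model
    for _ in range(rounds):
        if not pool:
            break
        scores = [acquisition(model, x) for x in pool]    # score the unlabeled pool
        ranked = sorted(range(len(pool)), key=lambda i: scores[i], reverse=True)
        chosen = set(ranked[:batch_size])                 # select a batch to label
        labeled += [(pool[i], oracle(pool[i])) for i in sorted(chosen)]
        pool = [x for i, x in enumerate(pool) if i not in chosen]
        model = fit(labeled)                              # retrain and repeat
    return model, labeled, pool


# Toy setup (all assumed for illustration): integer features, true boundary at 63.
def fit_threshold(labeled):
    # Midpoint between the largest known negative and the smallest known positive.
    neg = [x for x, y in labeled if y == 0]
    pos = [x for x, y in labeled if y == 1]
    return (max(neg) + min(pos)) / 2

def closeness_to_boundary(threshold, x):
    # Samples nearest the current threshold are the most uncertain.
    return -abs(x - threshold)

def annotate(x):
    return int(x >= 63)

model, labeled, pool = active_learning_loop(
    labeled=[(0, 0), (100, 1)],
    pool=list(range(1, 100)),
    fit=fit_threshold, acquisition=closeness_to_boundary, oracle=annotate,
    batch_size=1, rounds=8,
)
```

In this toy setup the queries behave like a bisection search: each new label halves the interval that can still contain the boundary, which is the kind of label efficiency the loop is meant to deliver.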

Common Active Learning Strategies

Common acquisition strategies include:

- Uncertainty sampling: select low-confidence predictions

- Margin sampling: select samples near decision boundaries

- Entropy-based sampling: select high-entropy outputs

- Query-by-committee: select samples on which a committee of models disagrees

- Diversity-based sampling: avoid redundant samples

- Hybrid strategies: combine uncertainty and diversity

The choice of strategy affects both the sampling bias introduced into the labeled set and how quickly the model converges.
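Several of the strategies listed above reduce to a scoring function over predicted class probabilities or committee votes. The following is a minimal sketch with hypothetical function names; each function returns a score where higher means "more worth labeling".

```python
import math
from collections import Counter
from typing import Sequence

def least_confidence(probs: Sequence[float]) -> float:
    """Uncertainty sampling: 1 minus the top class probability."""
    return 1.0 - max(probs)

def margin_score(probs: Sequence[float]) -> float:
    """Margin sampling: a small gap between the top two classes gives a high score.
    Assumes at least two classes."""
    top, second = sorted(probs, reverse=True)[:2]
    return 1.0 - (top - second)

def entropy_score(probs: Sequence[float]) -> float:
    """Entropy-based sampling: Shannon entropy (in nats) of the prediction."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def vote_entropy(votes: Sequence[int]) -> float:
    """Query-by-committee: entropy of the labels predicted by committee members."""
    counts = Counter(votes)
    n = len(votes)
    return -sum((c / n) * math.log(c / n) for c in counts.values())

confident = [0.90, 0.05, 0.05]
ambiguous = [0.40, 0.35, 0.25]
# Every score above ranks `ambiguous` higher than `confident`.
```

The three probability-based scores agree on binary problems but can rank samples differently with many classes, which is one reason the strategy choice matters.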

Minimal Conceptual Example

    # conceptual active learning step
    scores = acquisition(model, unlabeled_pool)
    to_label = select_top_k(scores)
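To make the conceptual step runnable, the two helper names it uses could be filled in as follows. Both definitions are assumptions for illustration: `model` is any callable returning class probabilities, `acquisition` uses least-confidence scoring, and `select_top_k` returns indices into the pool.

```python
import heapq

def acquisition(model, unlabeled_pool):
    """Score each pool item by 1 - max predicted probability (least confidence)."""
    return [1.0 - max(model(x)) for x in unlabeled_pool]

def select_top_k(scores, k=2):
    """Return the indices of the k highest-scoring pool items, best first."""
    return heapq.nlargest(k, range(len(scores)), key=scores.__getitem__)

# Hypothetical stand-in model: probability of class 1 grows linearly with x in [0, 1].
def model(x):
    return [1.0 - x, x]

unlabeled_pool = [0.05, 0.45, 0.90, 0.60, 0.20]
scores = acquisition(model, unlabeled_pool)
to_label = select_top_k(scores)  # indices of the items closest to x = 0.5
```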

Active Learning vs Active Sampling

- Active sampling: prioritizes samples during training

- Active learning: prioritizes samples for labeling

Active learning therefore includes an annotation step, typically with a human in the loop.

Benefits of Active Learning

Benefits include:

- reduced labeling cost

- faster performance gains

- focused learning on decision boundaries

- improved efficiency under class imbalance

Label efficiency is the primary objective.

Risks and Limitations

Active learning can introduce:

- sampling bias driven by early model errors

- poor coverage of the full data distribution

- overfitting to ambiguous or noisy samples

- operational complexity from labeling workflows

Safeguards, such as diversity constraints on each queried batch, help limit these effects.
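One simple safeguard against the sampling bias listed above is to fill part of each batch with uniformly random samples, so the labeled set keeps some coverage of the full distribution. The sketch below is one possible approach rather than a standard recipe; `select_with_exploration` and `explore_fraction` are hypothetical names.

```python
import random

def select_with_exploration(scores, k, explore_fraction=0.2, rng=None):
    """Pick k pool indices: mostly top-scoring, the rest drawn uniformly at random."""
    if not 0.0 <= explore_fraction <= 1.0:
        raise ValueError("explore_fraction must be in [0, 1]")
    rng = rng or random.Random()
    k = min(k, len(scores))
    n_random = int(round(k * explore_fraction))
    n_top = k - n_random
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    chosen = ranked[:n_top]                        # exploit: highest acquisition scores
    chosen += rng.sample(ranked[n_top:], n_random) # explore: random from the remainder
    return chosen

scores = [0.1, 0.9, 0.3, 0.8, 0.2, 0.7, 0.05, 0.6, 0.4, 0.5]
picked = select_with_exploration(scores, k=5, explore_fraction=0.4,
                                 rng=random.Random(0))
```

Here three of the five picks are the top-scoring indices and two come from the rest of the pool. A larger `explore_fraction` trades label efficiency for better coverage.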

Relationship to Class Imbalance and Rare Events

Active learning can help discover rare or minority-class examples by targeting uncertainty or disagreement. However, naive strategies may repeatedly query ambiguous negatives instead of true rare positives.

Acquisition criteria must reflect task goals.

Relationship to Generalization

Active learning improves sample efficiency but can distort the effective training distribution. Generalization should be evaluated on representative, passively sampled test data.

Relationship to Evaluation Protocols

Evaluation protocols must remain independent of active learning decisions. Using actively selected samples for evaluation introduces bias and invalidates performance estimates.
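In practice, this independence is easiest to guarantee by setting aside a random test split before the first acquisition round and never exposing it to the selection step. A minimal sketch, with the hypothetical helper name `reserve_test_set`:

```python
import random

def reserve_test_set(items, test_fraction=0.2, seed=0):
    """Randomly split items into (pool, test) before any active selection happens."""
    rng = random.Random(seed)
    shuffled = list(items)
    rng.shuffle(shuffled)
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]

# The active learning loop should only ever see `pool`;
# `test_set` is labeled separately and used for evaluation only.
pool, test_set = reserve_test_set(range(100), test_fraction=0.2, seed=42)
```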

Common Pitfalls

- evaluating on actively sampled data

- ignoring label noise in queried samples

- failing to monitor sampling bias

- assuming active learning always outperforms random sampling

- neglecting human annotation constraints

Active learning is powerful but not automatic.

Related Concepts

- Data & Distribution

- Active Sampling

- Sampling Strategies

- Importance Sampling

- Curriculum Learning

- Class Imbalance

- Rare Event Detection

- Evaluation Protocols