Short Definition

Active sampling is the selective choice of data points to label or prioritize based on their expected value to learning.

Definition

Active sampling is a data selection strategy in which the model (or an acquisition rule) actively decides which samples should be labeled, collected, or emphasized next. Rather than relying on random or passive sampling, active sampling focuses resources on the most informative, uncertain, or impactful data points.

Active sampling optimizes which data is seen next.

Why It Matters

Labeling data is often expensive, slow, or limited. Many datasets contain redundant or low-information samples that contribute little to model improvement. Active sampling reduces labeling cost while accelerating learning by concentrating effort where it matters most.

It is especially valuable under data scarcity or class imbalance.

How Active Sampling Works

A typical active sampling loop:

- Train a model on existing labeled data

- Evaluate unlabeled or weakly labeled samples

- Score samples using an acquisition function

- Select top-ranked samples for labeling or emphasis

- Retrain the model with the newly acquired data

Sampling decisions evolve as the model improves.
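The loop above can be sketched in a few lines of NumPy. This is only an illustrative sketch under stated assumptions: the nearest-centroid "model", the `oracle` callback that returns a label for a pool index, and the names `fit_centroids`, `predict_proba`, and `active_sampling_loop` are all hypothetical, and the initial labeled set is assumed to contain at least one example of every class.

```python
import numpy as np

def fit_centroids(X, y, n_classes):
    """Toy stand-in for a model: one mean vector per class."""
    return np.stack([X[y == c].mean(axis=0) for c in range(n_classes)])

def predict_proba(centroids, X):
    """Softmax over negative squared distances to each class centroid."""
    d2 = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    logits = -d2
    logits -= logits.max(axis=1, keepdims=True)  # numerical stability
    p = np.exp(logits)
    return p / p.sum(axis=1, keepdims=True)

def active_sampling_loop(X_pool, oracle, labeled_idx, n_classes, rounds=5, k=5):
    labeled = [int(i) for i in labeled_idx]
    labels = {i: oracle(i) for i in labeled}

    def train():
        y = np.array([labels[i] for i in labeled])
        return fit_centroids(X_pool[labeled], y, n_classes)

    for _ in range(rounds):
        model = train()                                   # 1. train on labeled data
        unlabeled = np.setdiff1d(np.arange(len(X_pool)), labeled)
        if len(unlabeled) == 0:
            break
        proba = predict_proba(model, X_pool[unlabeled])   # 2. evaluate unlabeled pool
        scores = 1.0 - proba.max(axis=1)                  # 3. least-confidence score
        top = unlabeled[np.argsort(-scores)[:k]]          # 4. select top-k
        for i in top:                                     # 5. acquire labels
            labels[int(i)] = oracle(int(i))
            labeled.append(int(i))
    return labeled, train()                               # final retrain

# Synthetic pool: two Gaussian blobs, one labeled seed example per class.
rng = np.random.default_rng(0)
X_pool = np.vstack([rng.normal(-2, 1, (50, 2)), rng.normal(2, 1, (50, 2))])
y_true = np.array([0] * 50 + [1] * 50)
labeled, model = active_sampling_loop(
    X_pool, lambda i: int(y_true[i]), [0, 50], n_classes=2, rounds=3, k=5
)
```

Because scores are recomputed from a freshly trained model every round, the samples chosen in later rounds depend on what was labeled earlier.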

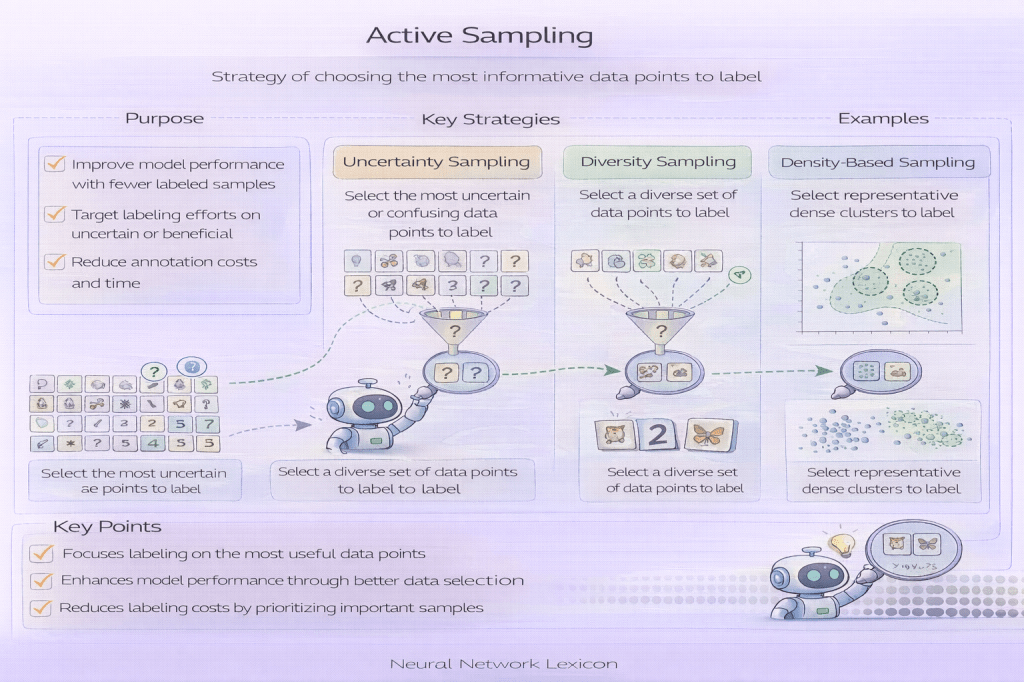

Common Active Sampling Strategies

Frequently used strategies include:

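- Uncertainty-based sampling: select samples the model predicts with low confidence

- Margin sampling: select samples near decision boundaries, where the top two predicted classes have similar probability

- Entropy-based sampling: select samples whose predicted class distribution has high entropy

- Diversity-based sampling: select samples that differ from each other and from already labeled data, avoiding redundancy

- Hybrid strategies: combine uncertainty and diversity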

Each strategy reflects a different notion of “informativeness.”
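As a rough illustration, the three uncertainty-style scores can be computed from a matrix of predicted class probabilities. The function names and the example probabilities below are made up for this sketch; each function returns a score where higher means "more informative" under that strategy.

```python
import numpy as np

def least_confidence(proba):
    """1 minus the probability of the most likely class."""
    return 1.0 - proba.max(axis=1)

def margin_score(proba):
    """Negative gap between the two most likely classes (small gap = high score)."""
    ordered = np.sort(proba, axis=1)
    return -(ordered[:, -1] - ordered[:, -2])

def entropy_score(proba):
    """Shannon entropy of the predicted class distribution."""
    p = np.clip(proba, 1e-12, 1.0)  # avoid log(0)
    return -(p * np.log(p)).sum(axis=1)

# Hypothetical predictions for three unlabeled samples over three classes.
proba = np.array([
    [0.98, 0.01, 0.01],  # confident prediction
    [0.50, 0.49, 0.01],  # two classes nearly tied
    [0.40, 0.30, 0.30],  # mass spread over all classes
])

pick_lc = int(np.argmax(least_confidence(proba)))
pick_margin = int(np.argmax(margin_score(proba)))
pick_entropy = int(np.argmax(entropy_score(proba)))
```

On these inputs, margin sampling prefers the second row (the near tie), while least confidence and entropy prefer the third row, which shows how the strategies can disagree about which sample is most informative.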

Minimal Conceptual Example

    # conceptual active sampling
    scores = acquisition_function(model, unlabeled_pool)
    selected = select_top_k(scores)

Active Sampling vs Random Sampling

- Random sampling: unbiased but inefficient

- Active sampling: efficient but biased by current model state

Active sampling trades the simplicity and unbiasedness of random sampling for learning efficiency.

Risks and Limitations

Active sampling can introduce:

- sampling bias toward regions where the current model is uncertain, which can leave confidently wrong blind spots unsampled

- reduced coverage of the full data distribution

- reinforcement of early modeling errors

- instability if acquisition criteria are poorly chosen

Safeguards and diversity constraints are important.
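One possible safeguard, sketched below under assumptions, is to reserve part of each labeling budget for uniformly random picks and to spread the remaining picks out with a greedy farthest-point rule over a shortlist of high-scoring candidates. The function name `select_with_safeguards` and its parameters `random_frac` and `shortlist_factor` are hypothetical choices for this example, not a standard API.

```python
import numpy as np

def select_with_safeguards(X, scores, k, random_frac=0.2, shortlist_factor=3, rng=None):
    """Pick k pool indices: mostly high-scoring and spread out, plus some random ones."""
    rng = np.random.default_rng() if rng is None else rng
    n = len(scores)
    k = min(k, n)
    n_random = int(round(random_frac * k))
    n_active = k - n_random

    chosen = []
    if n_active > 0:
        # Shortlist the highest-scoring candidates.
        shortlist = np.argsort(-scores)[: min(n, shortlist_factor * n_active)]
        cand = X[shortlist]
        # Greedy farthest-point selection, starting from the top-scoring candidate.
        pos = [0]
        min_dist = np.linalg.norm(cand - cand[0], axis=1)
        min_dist[0] = -np.inf  # never pick the same candidate twice
        while len(pos) < n_active:
            nxt = int(np.argmax(min_dist))
            pos.append(nxt)
            min_dist = np.minimum(min_dist, np.linalg.norm(cand - cand[nxt], axis=1))
            min_dist[nxt] = -np.inf
        chosen = [int(i) for i in shortlist[pos]]

    # Random picks from everything not already chosen keep some coverage
    # of the full pool, independent of the current model's scores.
    remaining = np.setdiff1d(np.arange(n), chosen)
    if n_random > 0 and len(remaining) > 0:
        extra = rng.choice(remaining, size=min(n_random, len(remaining)), replace=False)
        chosen += [int(i) for i in extra]
    return np.array(chosen, dtype=int)

# Example with a synthetic pool and made-up acquisition scores.
rng = np.random.default_rng(0)
X = rng.normal(size=(100, 2))
scores = rng.random(100)
picked = select_with_safeguards(X, scores, k=10, rng=rng)
```

The random fraction trades some efficiency for coverage; setting `random_frac=0` recovers a purely score-and-diversity-driven selection.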

Relationship to Class Imbalance and Rare Events

Active sampling can significantly improve learning on rare or minority classes by intentionally seeking informative positive examples. However, careless strategies may oversample ambiguous noise instead of meaningful rare events.

Relationship to Generalization

While active sampling improves sample efficiency, it can distort the effective training distribution. Care must be taken to ensure that gains in learning speed do not degrade generalization or calibration.

Related Concepts

- Data & Distribution

- Sampling Strategies

- Importance Sampling

- Class Imbalance

- Rare Event Detection

- Curriculum Learning

- Active Learning