Short Definition

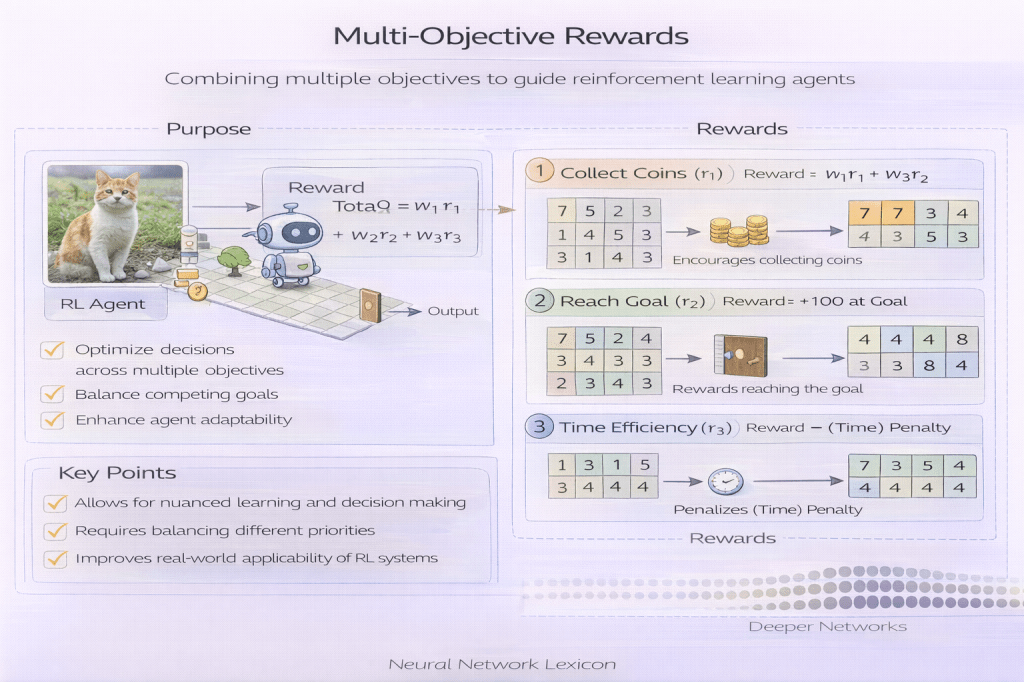

Multi-objective rewards encode multiple goals, constraints, or trade-offs into the learning signal of a model or policy.

Definition

Multi-objective rewards are reward formulations that represent more than one objective simultaneously (such as performance, cost, safety, fairness, or latency) rather than assuming that a single scalar reward can capture every goal. They acknowledge that real-world systems must balance competing priorities.

Real objectives are plural.

Why It Matters

Single-objective rewards oversimplify reality and often lead to reward hacking, metric gaming, or unsafe behavior. Multi-objective rewards make trade-offs explicit and allow learning systems to optimize within realistic constraints.

One reward rarely captures all values.

Common Objectives Combined in Practice

Multi-objective rewards may include:

- predictive performance

- decision cost or utility

- risk or safety penalties

- fairness or group constraints

- uncertainty or abstention penalties

- operational constraints (latency, budget)

Objectives reflect values and limits.

Minimal Conceptual Illustration

Reward = f(Performance, Cost, Risk, Fairness, Constraints)
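The function `f` can be made concrete in code. The sketch below is illustrative only: it assumes a hypothetical per-episode `Outcome` record with hypothetical field names and a hypothetical latency budget, and it keeps each objective as its own signed component (higher is better) so that the combination rule `f` remains a separate, explicit choice.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Outcome:
    """Hypothetical per-episode measurements; field names are illustrative."""
    accuracy: float      # predictive performance, higher is better
    cost: float          # decision or operational cost, lower is better
    risk: float          # estimated safety risk, lower is better
    fairness_gap: float  # e.g. error-rate gap between groups, lower is better
    latency_ms: float    # input to an operational constraint


def reward_components(o: Outcome, latency_budget_ms: float = 100.0) -> Dict[str, float]:
    """Return one component per objective, signed so that higher is better."""
    return {
        "performance": o.accuracy,
        "cost": -o.cost,
        "risk": -o.risk,
        "fairness": -o.fairness_gap,
        # Constraint term: 0 within the (hypothetical) budget, minus the overshoot otherwise.
        "constraints": -max(0.0, o.latency_ms - latency_budget_ms),
    }


def reward(o: Outcome, f: Callable[[Dict[str, float]], float]) -> float:
    """Reward = f(components); choosing f is the design decision."""
    return f(reward_components(o))
```

Any of the design approaches below can be passed in as `f`; keeping the components available also makes it possible to log and audit each objective separately.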

Design Approaches

Weighted Sum

Combine objectives into a single scalar using weights.

- simple and common

- sensitive to weight choice

- hides trade-offs
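A minimal weighted-sum sketch, assuming components are already oriented so that higher is better. The two policies and both weight settings are hypothetical numbers chosen to show weight sensitivity: a modest change to the cost weight flips which policy the scalar reward prefers, and neither scalar reveals that a trade-off was made.

```python
from typing import Dict


def weighted_sum(components: Dict[str, float], weights: Dict[str, float]) -> float:
    """Scalarize components as sum_i w_i * r_i.

    Raises KeyError if a component has no weight, so no objective is dropped silently.
    """
    return sum(weights[name] * value for name, value in components.items())


# Hypothetical policies, each summarized by its (higher-is-better) components.
policy_a = {"performance": 0.92, "cost": -0.30}
policy_b = {"performance": 0.85, "cost": -0.10}

# Two hypothetical weightings that differ only in how much cost matters.
perf_heavy = {"performance": 1.0, "cost": 0.2}
cost_heavy = {"performance": 1.0, "cost": 0.5}

for label, w in [("perf_heavy", perf_heavy), ("cost_heavy", cost_heavy)]:
    a, b = weighted_sum(policy_a, w), weighted_sum(policy_b, w)
    print(f"{label}: A={a:.2f} B={b:.2f} -> prefers {'A' if a > b else 'B'}")
```

With the performance-heavy weights, policy A scores about 0.86 against 0.83 for B; with the cost-heavy weights, A scores about 0.77 against 0.80, so the preference reverses.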

Lexicographic Ordering

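Prioritize objectives hierarchically: a lower-priority objective only decides between outcomes that are tied on every higher-priority one.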

- enforces hard constraints

- inflexible when priorities or context change
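A sketch of lexicographic comparison, assuming objectives are listed from highest to lowest priority and oriented so that higher is better. The per-objective tolerances are an added assumption (a common relaxation); with all tolerances at zero this reduces to Python's built-in tuple ordering. Tolerance-based comparison is not guaranteed to be transitive.

```python
from typing import Sequence


def lexicographic_better(a: Sequence[float], b: Sequence[float],
                         tolerances: Sequence[float]) -> bool:
    """Return True if outcome a beats outcome b under lexicographic ordering.

    Index 0 is the highest-priority objective. A lower-priority objective is
    consulted only when all higher-priority ones are tied within tolerance.
    """
    for x, y, tol in zip(a, b, tolerances):
        if x > y + tol:
            return True
        if y > x + tol:
            return False
    return False  # tied on every objective


# Hypothetical outcomes: (safety score = -violations, performance).
safe_but_slower = (0.0, 0.80)
unsafe_but_faster = (-1.0, 0.95)
print(lexicographic_better(safe_but_slower, unsafe_but_faster, (0.0, 0.01)))
```

Safety dominates here no matter how large the performance gap is, which is what makes the ordering enforce hard priorities and also what makes it rigid.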

Constraint-Based Rewards

Optimize a primary reward subject to constraints.

- aligns with governance and regulation

- requires constraint monitoring
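One common way to optimize a primary reward subject to a constraint is Lagrangian relaxation: penalize constraint violation with a multiplier λ and raise λ while the constraint is violated. The sketch below swaps in a toy one-parameter "policy" `p` with hypothetical reward `p` and hypothetical cost `p * p` under the limit `cost <= 0.25`; the grid search stands in for a real training step. Under these assumptions the loop settles near `p = 0.5` and `λ ≈ 1`.

```python
from typing import Tuple


def reward(p: float) -> float:
    # Hypothetical primary objective: a more aggressive policy earns more.
    return p


def cost(p: float) -> float:
    # Hypothetical constrained quantity (e.g. expected risk).
    return p * p


def lagrangian_solve(limit: float = 0.25, lr: float = 0.5,
                     steps: int = 300) -> Tuple[float, float]:
    """Maximize reward(p) subject to cost(p) <= limit via dual ascent on lambda."""
    grid = [i / 1000 for i in range(1001)]  # candidate policy parameters in [0, 1]
    lam = 0.0
    p = grid[-1]
    for _ in range(steps):
        # Inner step: best policy for the current penalty weight
        # (a stand-in for a full training run).
        p = max(grid, key=lambda q: reward(q) - lam * (cost(q) - limit))
        # Outer step: raise lambda while the constraint is violated, lower it otherwise.
        lam = max(0.0, lam + lr * (cost(p) - limit))
    return p, lam


p, lam = lagrangian_solve()
print(f"p={p:.3f} lambda={lam:.3f} cost={cost(p):.3f}")
```

The multiplier doubles as a monitoring signal: a λ that keeps rising indicates the constraint is binding or the policy keeps drifting into violation.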

Vector-Valued Rewards

Maintain separate reward components.

- preserves trade-offs

- complicates optimization and evaluation
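With vector-valued rewards, candidates are compared by Pareto dominance instead of by a single score. A minimal sketch, assuming every component is oriented so that higher is better; the candidate vectors (performance, negated risk) are hypothetical. The result is a set of non-dominated trade-offs rather than one winner, which is what makes downstream optimization and evaluation harder.

```python
from typing import List, Sequence


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """a Pareto-dominates b: at least as good on every objective, strictly better on one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(points: List[Sequence[float]]) -> List[Sequence[float]]:
    """Return the points not dominated by any other point (simple O(n^2) sketch)."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Hypothetical reward vectors: (performance, -risk).
candidates = [(0.90, -0.30), (0.85, -0.10), (0.80, -0.20), (0.95, -0.50)]
print(pareto_front(candidates))
```

Here `(0.80, -0.20)` is dropped because `(0.85, -0.10)` is better on both components; the remaining three each win on some trade-off.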

Design choice shapes behavior.

Relationship to Multi-Metric Optimization

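Multi-objective rewards are the learning-time counterpart to multi-metric evaluation. Rewards guide optimization; metrics assess outcomes. Conflating the two increases gaming risk: once an evaluation metric is also optimized as a reward, it no longer provides an independent check on behavior.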

Train with care; evaluate independently.

Relationship to Decision Cost Functions

Decision cost functions can be seen as a principled form of multi-objective reward, where different outcomes incur different costs or utilities. Explicit cost modeling clarifies trade-offs.

Costs unify objectives.
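A decision cost function can be sketched as a cost table indexed by action and true outcome, with the chosen action minimizing expected cost. The labels, the abstain option, and all cost values below are hypothetical; the point is that one explicit table folds performance, risk, and operational cost (here, human review) into a single trade-off.

```python
from typing import Dict

# Hypothetical cost table: COST[action][true_label]. Values are illustrative only.
COST: Dict[str, Dict[str, float]] = {
    "approve": {"good": 0.0, "bad": 10.0},  # false approval is expensive
    "reject": {"good": 2.0, "bad": 0.0},    # false rejection forgoes some utility
    "abstain": {"good": 1.0, "bad": 1.0},   # escalate to human review at a fixed cost
}


def expected_cost(action: str, p_bad: float) -> float:
    """Expected cost of an action given the model's probability that the case is bad."""
    return (1 - p_bad) * COST[action]["good"] + p_bad * COST[action]["bad"]


def best_action(p_bad: float) -> str:
    """Pick the action with the lowest expected cost."""
    return min(COST, key=lambda a: expected_cost(a, p_bad))


for p in (0.05, 0.3, 0.8):
    print(p, best_action(p))
```

Under these costs, `best_action(0.05)` returns `"approve"`, `best_action(0.3)` returns `"abstain"`, and `best_action(0.8)` returns `"reject"`; changing a single cost entry moves those decision boundaries, which is why the table itself needs justification.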

Interaction with Exploration

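Multi-objective rewards influence exploration by changing how much uncertainty or risk an agent is willing to accept. Poorly balanced rewards may discourage the exploration needed to learn about safety-critical or long-term objectives, for example when a large immediate penalty makes any unfamiliar action look worse than a known, mediocre one.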

Exploration depends on incentives.

Risks and Failure Modes

- reward hacking across objectives

- unstable learning due to conflicting gradients

- hidden prioritization via weights

- difficulty interpreting learned behavior

- sensitivity to distribution shift

More objectives, more complexity.
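Conflicting gradients can be detected by checking whether two objectives' gradients point in opposing directions (negative cosine similarity). The projection below is a simplified, pairwise version of the step used by PCGrad ("gradient surgery"), a multi-task learning method swapped in here for illustration; the two gradient vectors are hypothetical.

```python
import math
from typing import List, Sequence


def cosine(g1: Sequence[float], g2: Sequence[float]) -> float:
    """Cosine similarity between two gradients; 0.0 if either is the zero vector."""
    dot = sum(a * b for a, b in zip(g1, g2))
    n1 = math.sqrt(sum(a * a for a in g1))
    n2 = math.sqrt(sum(b * b for b in g2))
    return dot / (n1 * n2) if n1 > 0 and n2 > 0 else 0.0


def project_conflict(g1: Sequence[float], g2: Sequence[float]) -> List[float]:
    """If g1 conflicts with g2 (negative dot product), remove g1's component along g2.

    Otherwise g1 is returned unchanged.
    """
    dot = sum(a * b for a, b in zip(g1, g2))
    if dot >= 0:
        return list(g1)
    sq = sum(b * b for b in g2)  # nonzero here, since dot < 0 implies g2 != 0
    return [a - dot / sq * b for a, b in zip(g1, g2)]


# Hypothetical gradients of a performance objective and a safety objective.
g_perf = [1.0, 0.0]
g_safety = [-1.0, 1.0]
print(cosine(g_perf, g_safety), project_conflict(g_perf, g_safety))
```

For these vectors the cosine similarity is negative and the projected performance gradient is `[0.5, 0.5]`, which is orthogonal to the safety gradient, so a step along it no longer works directly against safety.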

Governance Considerations

Multi-objective rewards require:

- explicit documentation of objectives

- justification of trade-offs

- periodic review and adjustment

- alignment with evaluation governance

- long-term outcome auditing

Reward design is a governance decision.

Common Pitfalls

- choosing arbitrary weights without justification

- collapsing objectives prematurely

- optimizing convenience over correctness

- failing to revisit objectives as context changes

- assuming multi-objective rewards eliminate Goodhart effects

Plural rewards still need oversight.

Summary Characteristics

| Aspect | Multi-Objective Rewards |
|---|---|
| Objectives | Multiple |
| Trade-offs | Explicit |
| Learning complexity | Higher |
| Gaming risk | Reduced but present |
| Governance need | High |

Related Concepts

- Generalization & Evaluation

- Reward Design

- Multi-Metric Optimization

- Decision Cost Functions

- Goodhart’s Law (ML Context)

- Reward Hacking

- Outcome-Aware Evaluation

- Evaluation Governance