Short Definition

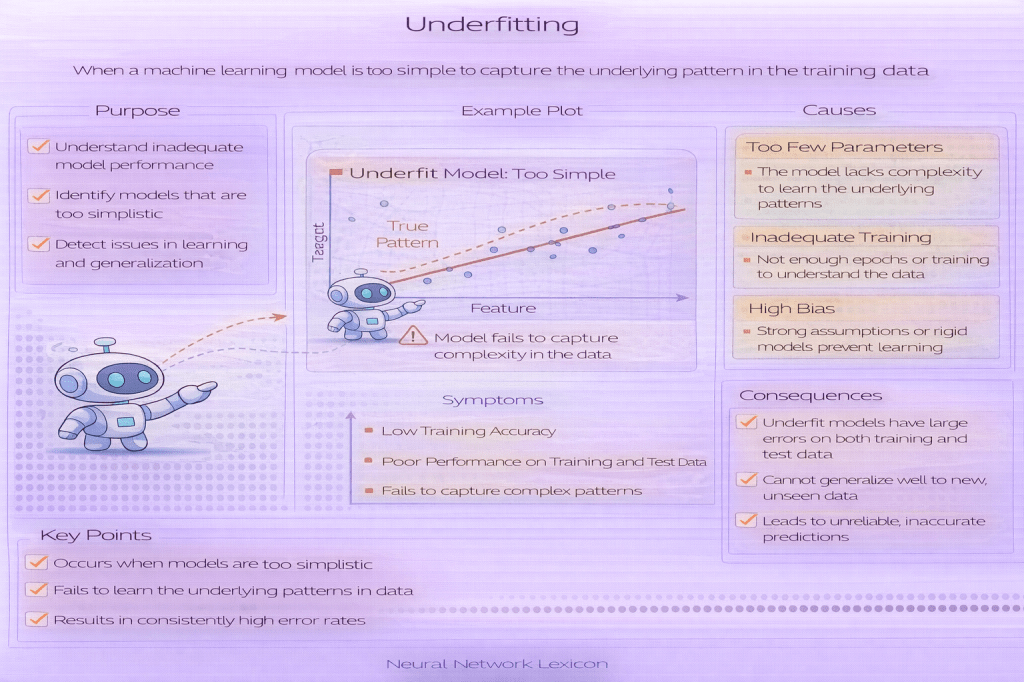

Underfitting occurs when a model is too simple to capture meaningful patterns in the data.

Definition

Underfitting is a failure mode in which a model, such as a neural network, does not learn the underlying structure of the data. The model performs poorly not only on unseen data but also on the training data itself. This typically happens when the model has insufficient capacity, is trained for too few steps, or is constrained by overly strong regularization.

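An underfitted model typically has high bias and low variance: its predictions are systematically off, but they change little from one training set to another.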
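For squared-error loss, the standard bias-variance decomposition makes this statement concrete. The notation here is an assumption introduced for illustration: the data follow $y = f(x) + \varepsilon$ with noise variance $\sigma^2$, and $\hat{f}$ is the model fitted on a randomly drawn training set.

$$
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
= \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
+ \underbrace{\mathbb{E}\big[\big(\hat{f}(x) - \mathbb{E}[\hat{f}(x)]\big)^2\big]}_{\text{variance}}
+ \sigma^2
$$

In an underfitted model the first term dominates: the average prediction is far from $f(x)$, even though individual fits barely differ from one another.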

Why It Matters

Underfitting means the model has not learned enough to be useful. While it is often less dangerous than overfitting, because poor training performance makes it easy to spot, it still wastes data and training time and can lead to misleading conclusions about model or data quality.

Understanding underfitting is essential for diagnosing whether poor performance is due to:

- model simplicity

- training procedure

- data representation issues

How It Works (Conceptually)

- The model’s hypothesis space is too limited

- Learned patterns are overly simplistic

- Training loss remains high

- Validation loss is also high and close to training loss

Underfitting indicates that the model cannot represent the target function adequately.
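The points above can be reproduced with a small experiment. The following is a minimal sketch, not a reference implementation: the quadratic target, the noise level, the sample sizes, and the helper names `make_data` and `fit_and_score` are all assumptions chosen for illustration. A straight line is fitted to quadratic data, so its hypothesis space cannot contain the target function.

```python
import numpy as np

rng = np.random.default_rng(0)

def make_data(n):
    """Draw n samples from an assumed target y = x^2 plus Gaussian noise."""
    x = rng.uniform(-3.0, 3.0, size=n)
    y = x**2 + rng.normal(0.0, 0.3, size=n)
    return x, y

x_train, y_train = make_data(200)
x_val, y_val = make_data(200)

def fit_and_score(degree):
    """Fit a polynomial of the given degree; return (train MSE, validation MSE)."""
    coeffs = np.polyfit(x_train, y_train, deg=degree)
    train_mse = np.mean((np.polyval(coeffs, x_train) - y_train) ** 2)
    val_mse = np.mean((np.polyval(coeffs, x_val) - y_val) ** 2)
    return train_mse, val_mse

# Degree 1 cannot represent a parabola: both errors stay high and close together.
linear_train, linear_val = fit_and_score(1)

# Degree 2 matches the target family: both errors drop toward the noise level.
quad_train, quad_val = fit_and_score(2)

print(f"linear:    train MSE = {linear_train:.2f}, val MSE = {linear_val:.2f}")
print(f"quadratic: train MSE = {quad_train:.2f}, val MSE = {quad_val:.2f}")
```

The linear fit shows the underfitting signature described in the list: high training error, with validation error of similar size. Raising the capacity by one degree removes it.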

Minimal Python Example

    train_accuracy = 0.55
    val_accuracy = 0.54  # both low → underfitting

Common Pitfalls

- Assuming more data will fix underfitting

- Using overly simple models for complex tasks

- Stopping training too early

- Applying excessive regularization

- Confusing underfitting with noisy data
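The first pitfall can be checked numerically. This is a hedged sketch under stated assumptions: the sine target, the noise standard deviation of 0.1, the sample sizes, and the helper name `linear_fit_mse` are illustrative choices, not part of any particular library or workflow. It fits a straight line to a small and a large training set drawn from the same distribution.

```python
import numpy as np

rng = np.random.default_rng(1)

NOISE_STD = 0.1  # assumed label noise; the irreducible MSE is NOISE_STD**2

def sample(n):
    """Draw n noisy samples of an assumed target y = sin(x) on [0, 2*pi]."""
    x = rng.uniform(0.0, 2.0 * np.pi, size=n)
    y = np.sin(x) + rng.normal(0.0, NOISE_STD, size=n)
    return x, y

def linear_fit_mse(n_train, n_val=2000):
    """Fit a straight line on n_train samples; return (train MSE, validation MSE)."""
    x_train, y_train = sample(n_train)
    x_val, y_val = sample(n_val)
    slope, intercept = np.polyfit(x_train, y_train, deg=1)
    train_mse = np.mean((slope * x_train + intercept - y_train) ** 2)
    val_mse = np.mean((slope * x_val + intercept - y_val) ** 2)
    return train_mse, val_mse

small_train, small_val = linear_fit_mse(100)
large_train, large_val = linear_fit_mse(10_000)

print(f"noise floor:      {NOISE_STD**2:.3f}")
print(f"100 samples:      train MSE = {small_train:.3f}, val MSE = {small_val:.3f}")
print(f"10,000 samples:   train MSE = {large_train:.3f}, val MSE = {large_val:.3f}")
```

With a hundred times more data, the error of the linear model stays well above the noise floor, because the limit is the model's capacity rather than the sample size. The same comparison helps separate underfitting from noisy data: if the error sits near the noise floor, a more flexible model would not improve it much either.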

Related Concepts

- Generalization

- Overfitting

- Bias–Variance Tradeoff

- Model Capacity

- Regularization

- Training Dynamics