Short Definition

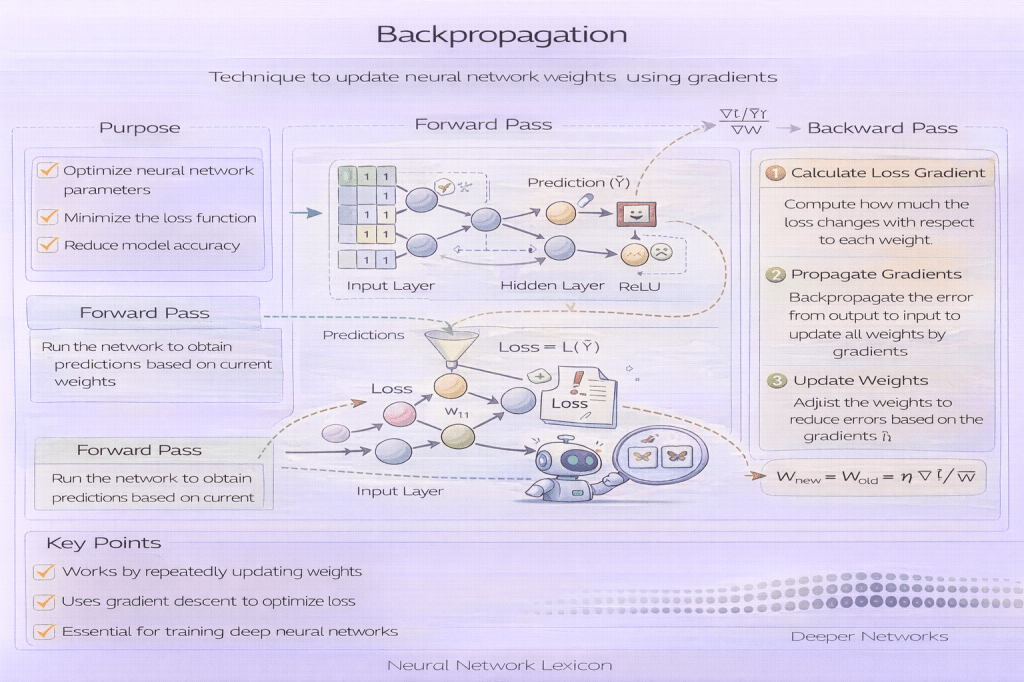

Backpropagation computes gradients needed to train a neural network.

Definition

Backpropagation is an algorithm that determines how each weight and bias contributes to the loss. It works by propagating error information backward through the network using the chain rule.

This process enables efficient training of deep networks by systematically computing gradients for all parameters.
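As a small worked illustration of the chain rule step, consider a single linear unit. The symbols here are assumptions chosen for the example (input x, weight w, bias b, prediction ŷ, target y, squared-error loss L); the definition above does not prescribe a particular model or loss.

```latex
\begin{aligned}
\hat{y} &= w x + b, \qquad L = (\hat{y} - y)^2 \\
\frac{\partial L}{\partial \hat{y}} &= 2(\hat{y} - y) \\
\frac{\partial L}{\partial w} &= \frac{\partial L}{\partial \hat{y}} \cdot \frac{\partial \hat{y}}{\partial w} = 2(\hat{y} - y)\, x \\
\frac{\partial L}{\partial b} &= \frac{\partial L}{\partial \hat{y}} \cdot \frac{\partial \hat{y}}{\partial b} = 2(\hat{y} - y)
\end{aligned}
```

The error term 2(ŷ - y) is computed once at the output and reused for both parameters. In a deeper network the same reuse happens layer by layer, which is where the efficiency comes from.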

Why It Matters

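Backpropagation is what makes gradient-based training of neural networks practical. It produces the gradients for all parameters in one backward pass, whereas estimating them by perturbing one parameter at a time would require a separate forward pass per parameter.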

How It Works (Conceptually)

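- Run a forward pass and compute the loss

- Apply the chain rule backward from the loss, layer by layer

- Calculate the gradient of the loss with respect to each weight and bias

- Update the parameters by stepping against the gradient (strictly, this step belongs to the optimizer, such as gradient descent, rather than to backpropagation itself)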
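The steps above can be sketched in plain Python for a tiny network with one input, one tanh hidden unit, and one output. The architecture, the function names (`forward`, `backward`, `train_step`), the starting parameters, and the learning rate are all assumptions made for illustration, not a reference implementation.

```python
import math

def forward(params, x, target):
    """Forward pass for a tiny 1-1-1 network; returns the loss and cached values."""
    w1, b1, w2, b2 = params
    z = w1 * x + b1              # hidden pre-activation
    h = math.tanh(z)             # hidden activation
    prediction = w2 * h + b2     # network output
    loss = (prediction - target) ** 2
    return loss, (h, prediction)

def backward(params, x, target, cache):
    """Apply the chain rule from the loss back to each parameter."""
    w1, b1, w2, b2 = params
    h, prediction = cache
    d_prediction = 2 * (prediction - target)   # dL/d(prediction)
    d_w2 = d_prediction * h                    # dL/dw2
    d_b2 = d_prediction                        # dL/db2
    d_h = d_prediction * w2                    # error passed back to the hidden unit
    d_z = d_h * (1 - h ** 2)                   # tanh'(z) = 1 - tanh(z)^2
    d_w1 = d_z * x                             # dL/dw1
    d_b1 = d_z                                 # dL/db1
    return [d_w1, d_b1, d_w2, d_b2]

def train_step(params, x, target, learning_rate=0.1):
    """One full cycle: compute loss, backpropagate, update parameters."""
    loss, cache = forward(params, x, target)
    grads = backward(params, x, target, cache)
    new_params = [p - learning_rate * g for p, g in zip(params, grads)]
    return new_params, loss

# Hypothetical starting parameters and a single training example.
params = [0.5, 0.0, -0.3, 0.1]
losses = []
for _ in range(50):
    params, loss = train_step(params, x=1.5, target=1.0)
    losses.append(loss)
```

Each intermediate gradient (`d_prediction`, `d_h`, `d_z`) is computed once and reused by the quantities upstream of it. A common sanity check for a hand-written backward pass is to compare its output against finite-difference estimates of the loss.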

Minimal Python Example

gradient = 2 * (prediction - target)  # derivative of (prediction - target) ** 2 with respect to prediction

Common Pitfalls

- Treating it as a black box

- Mixing math notation with code

- Forgetting gradient direction: the gradient points toward increasing loss, so an update must subtract it
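The gradient-direction pitfall can be made concrete with a one-weight model. This is a minimal sketch: the names `loss_fn` and `loss_gradient`, the input, the target, and the learning rate are hypothetical values picked for the example.

```python
def loss_fn(weight, x=2.0, target=4.0):
    """Squared error of a one-weight linear model."""
    return (weight * x - target) ** 2

def loss_gradient(weight, x=2.0, target=4.0):
    """Derivative of loss_fn with respect to weight, via the chain rule."""
    return 2 * (weight * x - target) * x

weight = 0.0
learning_rate = 0.05
grad = loss_gradient(weight)

# Correct: step against the gradient to reduce the loss.
descent_weight = weight - learning_rate * grad
# Sign error: stepping along the gradient increases the loss.
ascent_weight = weight + learning_rate * grad

print(loss_fn(descent_weight), loss_fn(weight), loss_fn(ascent_weight))
```

With these values the loss falls after the subtracting update and rises after the adding one, so a training loss that climbs steadily from the first step is often a hint of a sign error.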

Related Concepts

- Chain Rule

- Gradients

- Optimization